Written by Sam McGeown

on 23/5/2019

I ran into this UI bug the other day when I was trying to enable route redistribution on an Edge in a Secondary site of a cross-vCenter NSX deployment.

The Edge itself was deployed correctly and configured to peer with a physical northbound router; however, when I attempted to configure route redistribution, I was unable to do so.

Fortunately, the solution was simple - use the API.

Perform a GET operation to retrieve the current BGP configuration: GET https://{{nsxmanager}}/api/4.
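
As a rough sketch of that GET call in Python (not taken from the original post): the helper below only builds the request, and the URL is left to the caller because the path above is cut off. The name `build_get_request`, the Basic authentication header and the XML `Accept` header are assumptions for illustration.

```python
import base64
import urllib.request


def build_get_request(url: str, username: str, password: str) -> urllib.request.Request:
    """Build (but do not send) an authenticated GET request.

    Assumptions: the NSX Manager accepts HTTP Basic authentication and
    returns XML. The full API path is not filled in here; pass the
    complete URL for your environment.
    """
    # Encode "user:password" for the Basic Authorization header.
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        method="GET",
        headers={
            "Authorization": f"Basic {token}",
            "Accept": "application/xml",
        },
    )
```

Passing the returned object to `urllib.request.urlopen` would send it; the response body could then be edited and sent back with a matching write request.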

Written by Sam McGeown

on 11/4/2019

Published under Community and Cloud Native

When I started my blog back in May 2007 (12 years ago!) I was running WordPress, then switched to DotNetNuke, then BlogEngine, then finally back to WordPress - which I’ve used since 2010. Today I’ve cut over to a new architecture based on Hugo and hosted on AWS using a combination of Route 53, CloudFront and S3.

Why the change? If it ain’t broke… You may well ask why I’ve made the move, or you may not…I’m going to tell you anyway…

Written by Simon Eady

on 5/4/2019

Published under VMware and vRealize Automation

I recently upgraded an instance of vRA from 7.2 to 7.5 and rather than do it the manual way I used VMware’s vRealize LifeCycle Manager (version 2.0 update 3).

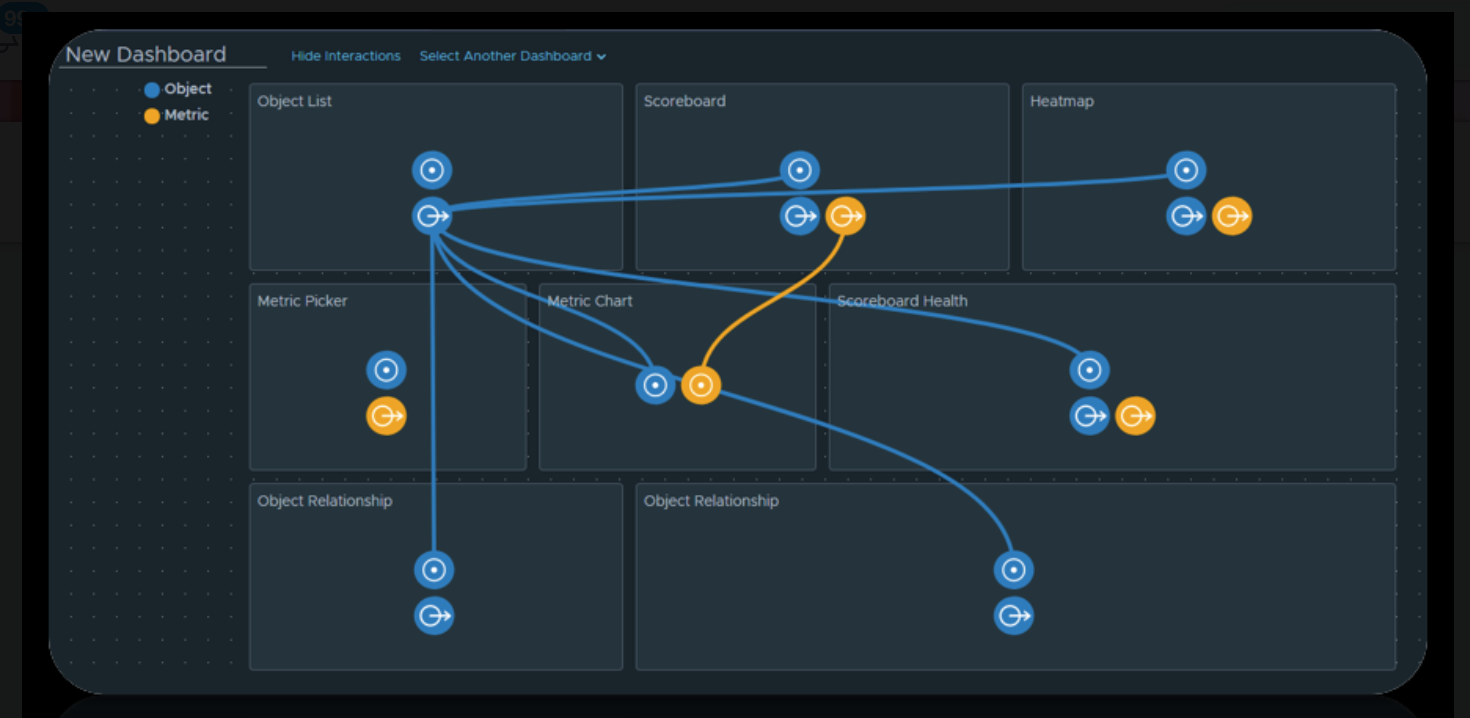

Everything was going great and according to plan. The vRLCM pre-requisites checker made short work of all the checks you need to do before you start an upgrade of vRA. You can see below that vRLCM does a great job of keeping you informed of the current progress, and in a really elegant way.

Written by Sam McGeown

on 14/3/2019

Most vSphere admins are more than comfortable using Update Manager to download patches and update their environment, but few that I talk to actually know much about the Update Manager Download Service (UMDS). UMDS is a tool you can install to download patches (and third party VIBs - I’ll get to that) for Update Manager. It’s useful for environments that don’t have access to the internet or are air-gapped, and also for environments with multiple vCenter Servers where you don’t necessarily want to download the same patch on every server.

Written by Simon Eady

on 8/3/2019

It has been a few years since I read (and lost) a great article on career progression and personal insight. That article helped me relax into who I am from a professional point of view, but it also challenged me.

I have been in IT for over 20 years now, and in truth the first 10 years were not so great (perhaps a story for another time). It was stumbling upon the vCommunity by way of Twitter, and subsequently attending my first VMUG (in London), that completely challenged and changed my way of thinking and my approach to my career.

Written by Simon Eady

on 27/2/2019

Published under VMware

For the last month I had been preparing for the VCAP7-CMA Design exam and I am very glad to say I passed on my first attempt.

Oddly, I found it slightly easier than the VCP7-CMA, but I suspect that is down to the fact that I spend a lot of my time designing and implementing solutions for customers, as opposed to the day-to-day administration of any given solution.

So what was the exam like?

Written by Sam McGeown

on 8/2/2019

Published under VMware

This series was originally going to be a more polished endeavour, but unfortunately time got in the way. A prod from James Kilby (@jameskilbynet) has convinced me to publish as is, as a series of lab notes. Maybe one day I’ll loop back and finish them…

Requirements

Routing

Because I’m backing my vCloud Director installation with NSX-T, I will be using my existing Tier-0 router, which interfaces with my physical router via BGP.

Written by Simon Eady

on 26/1/2019

Published under Career

It has been a while since I have had time to write a blog post; the last quarter of last year was pretty crazy from a work point of view.

Regardless, it is now a New Year and my tech focus is turning very much to CMP-related things, particularly vRealize Automation. (I am also very much looking forward to learning more about VMware’s CaS, which I saw demo’d at the UK VMUG late last year by Grant Orchard.)

Written by Sam McGeown

on 21/9/2018

Just a quick post today to cover a new vRO action and workflow I’ve uploaded to GitHub that configures vCenter High Availability in the basic mode. This is based on William Lam’s excellent PowerShell module that does the same, but using vRO. I also hope to release a version for the advanced mode based on my PowerShell script in the near future.

TL;DR - the package is available on GitHub

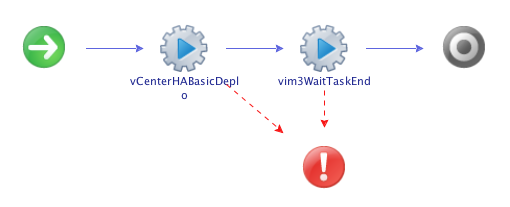

The workflow itself is pretty self-explanatory: it runs my deployment action, which returns a VC:Task, followed by the standard “wait for a task to end” action.

Written by Simon Eady

on 6/9/2018

Published under VMware and vRealize Operations

I did a quick search online and could not find a collated list, so I have put one together from the VMworld 2018 announcements and from comments made by my good friend Sunny Dua, PM for vRealize Operations, covering what we can expect to see in the next iteration of vROps (7.0). The list is in no particular order of interest or importance. Some of the improvements mentioned have been long-standing requests, so enjoy and get hyped (I know I am).
