When you are using a VMware orchestration platform with an official VMware plugin to manage a VMware product, you don’t really expect to have to fix the out-of-the-box workflows. However, while testing some workflows with a client the other day, we ran into a couple of issues with the vCloud Director plugin workflows.

Software versions used

- vCloud Director 5.5.1 (appliance for development) and 5.5.2 (production deployment)

- vRealize Orchestrator Appliance 5.5.2.1

- vCloud Director plugin 5.5.1.2

CPU allocations are incorrect for “Add a VDC”

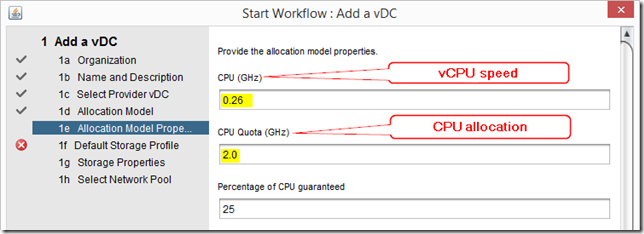

When you provide the CPU allocation model properties for the Allocation Pool model, the first problem is deciphering the naming - it doesn’t match the names in the vCloud Director interface!

The “CPU (GHz)” value is the vCPU speed, and the “CPU Quota (GHz)” value is the CPU allocation.

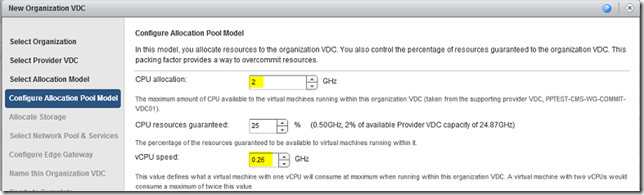

The equivalent in the vCloud Director interface is:

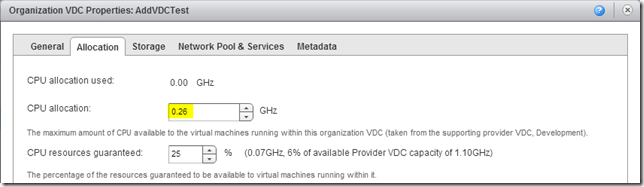

The created VDC has an incorrect CPU allocation of 0.26GHz and, though you can’t see it*, the vCPU speed is also set to 0.26GHz. If you swap the workflow inputs around, the CPU allocation is set to 2GHz but the vCPU speed is also set to 2GHz, which is wrong.

* This screenshot was taken from VCD 5.5.1, where vCPU speed is removed; it’s back in for VCD 5.5.2. You’ll have to trust me 🙂

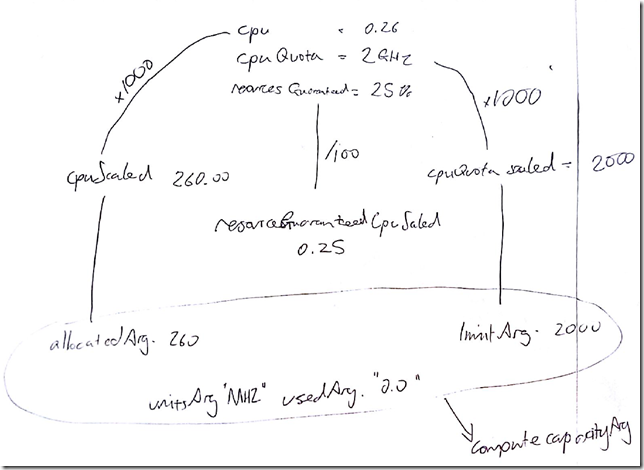

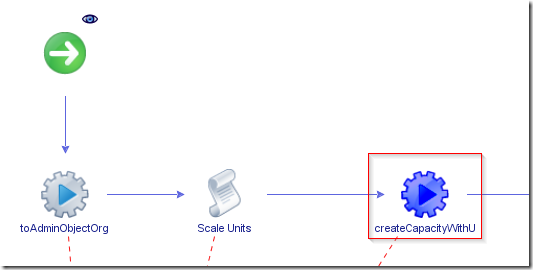

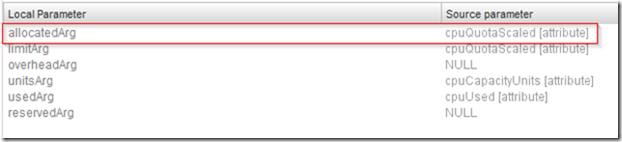

Picking through the “Add a VDC” workflow is a little bit like following Alice down the rabbit hole but eventually, with the help of a back-of-envelope drawing, I figured out the problem. The first “createCapacityWithUsage” action is used to create a “cpuCapacity” object, which is then used as an input for the “createComputeCapacity” action.

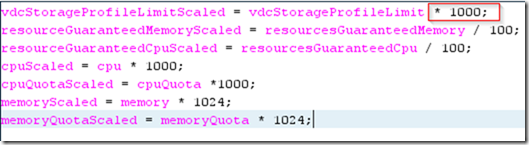

If I trace the variables through the workflow it goes like this:

- cpu (0.26) is multiplied by 1000 to create cpuScaled (260)

- cpuScaled is used as an input - allocatedArg - for createCapacityWithUsage

- cpuQuota (2) is multiplied by 1000 to create cpuQuotaScaled (2000)

- cpuQuotaScaled is used as an input - limitArg - for createCapacityWithUsage

- createCapacityWithUsage has two more arguments, unitsArg (MHz) and usedArg (0)

When the result of createCapacityWithUsage is used to create a VDC, cpuScaled and unitsArg combine to allocate 260MHz (0.26GHz) to the VDC, which is not the 2GHz I ordered!
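
The trace above can be modelled in a few lines of plain Python. This is only a sketch of the arithmetic, not the plugin's actual JavaScript: the `Capacity` class and `create_capacity_with_usage` function are hypothetical stand-ins that mirror the argument names listed above, and keyword arguments are used because the real argument order is not shown here.

```python
from dataclasses import dataclass

MHZ_PER_GHZ = 1000


@dataclass
class Capacity:
    """Hypothetical stand-in for the object createCapacityWithUsage returns."""
    units: str
    allocated: int
    limit: int
    used: int


def create_capacity_with_usage(*, units_arg, allocated_arg, limit_arg, used_arg):
    # Assumption: models only the four named inputs, nothing else the action does.
    return Capacity(units_arg, allocated_arg, limit_arg, used_arg)


# Workflow inputs from the example run.
cpu = 0.26      # "CPU (GHz)": the vCPU speed
cpu_quota = 2   # "CPU Quota (GHz)": the CPU allocation

cpu_scaled = round(cpu * MHZ_PER_GHZ)              # GHz -> MHz
cpu_quota_scaled = round(cpu_quota * MHZ_PER_GHZ)  # GHz -> MHz

# Out-of-the-box binding: allocatedArg is fed from cpuScaled.
cpu_capacity = create_capacity_with_usage(
    units_arg="MHz",
    allocated_arg=cpu_scaled,
    limit_arg=cpu_quota_scaled,
    used_arg=0,
)

print(cpu_capacity.allocated, cpu_capacity.units)  # the vCPU speed, not the quota
```

The allocated figure ends up carrying the vCPU speed rather than the quota, which matches what the created VDC shows.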

The solution is to create a copy of the “Add a VDC” workflow, then edit the first createCapacityWithUsage action and change the source parameter for allocatedArg to be cpuQuotaScaled.

Now when the new workflow runs, the CPU allocation and vCPU speed are set correctly.

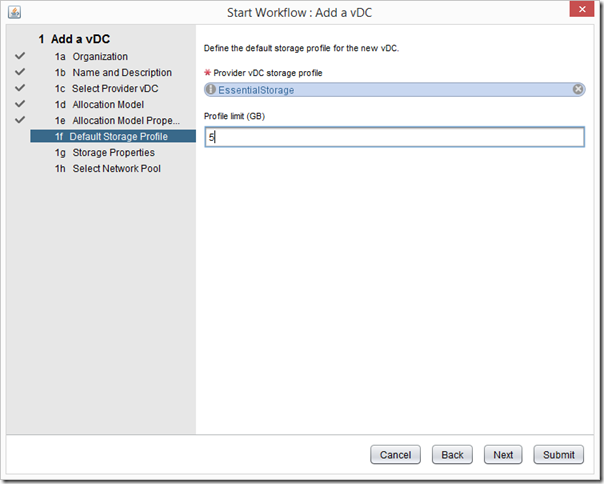
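
To make the effect of rebinding allocatedArg concrete, here is a rough before-and-after comparison in plain Python. It is a sketch under assumptions: `build_cpu_capacity` and its `fixed` flag are hypothetical names invented for illustration, the dictionary only mimics the four inputs of createCapacityWithUsage, and the vCPU speed handling elsewhere in the workflow is not modelled.

```python
MHZ_PER_GHZ = 1000


def build_cpu_capacity(cpu_ghz, cpu_quota_ghz, fixed):
    """Hypothetical model of the inputs passed to createCapacityWithUsage."""
    cpu_scaled = round(cpu_ghz * MHZ_PER_GHZ)
    cpu_quota_scaled = round(cpu_quota_ghz * MHZ_PER_GHZ)
    # Original workflow binds allocatedArg to cpuScaled;
    # the edited copy binds it to cpuQuotaScaled instead.
    allocated = cpu_quota_scaled if fixed else cpu_scaled
    return {
        "unitsArg": "MHz",
        "allocatedArg": allocated,
        "limitArg": cpu_quota_scaled,
        "usedArg": 0,
    }


original = build_cpu_capacity(0.26, 2, fixed=False)
patched = build_cpu_capacity(0.26, 2, fixed=True)

print("original allocation (MHz):", original["allocatedArg"])
print("patched allocation (MHz):", patched["allocatedArg"])
```

Only the source of allocatedArg changes; limitArg, unitsArg and usedArg are left exactly as the original workflow supplies them.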

Storage allocation is incorrect for “Add a VDC”

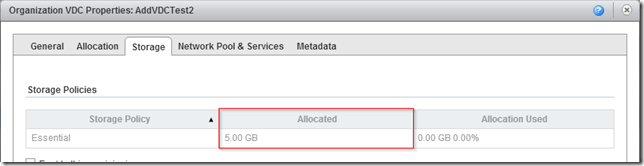

There is also a problem with the size of the storage profile limit when creating a VDC with the default “Add a VDC” workflow - you can see from the screenshot below I am allocating a 5GB profile limit.

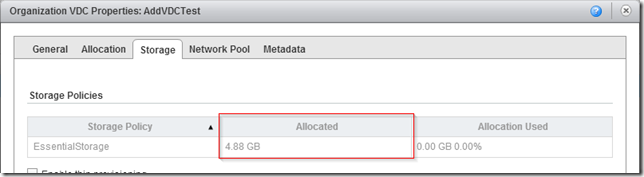

However, when you check the storage allocation it is incorrect - allocating 4.88GB rather than 5GB.

This becomes particularly problematic if you are creating a VDC for a paying customer - “I’ve paid for 5GB, where is the rest of my storage?!”

This is actually pretty easy to fix, since the second action in the workflow is a scripting block called “Scale Units”, which multiplies the storage profile limit by 1000. If you take the 5GB and multiply it by 1000, then divide it by 1024, you get 4.88GB - so we can see where the problem is introduced.

By simply modifying that figure to 1024 we can solve this one, and in the re-run of the workflow the storage allocation is correct.
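
The arithmetic is easy to check with a quick Python sketch. The function name `displayed_gb` is made up for illustration, and it assumes the scaled value is treated as MB and divided by 1024 when shown in GB, as the 4.88GB figure suggests.

```python
def displayed_gb(limit_gb, scale_factor):
    """Scale a GB limit as "Scale Units" does, then convert back at 1024 per GB."""
    scaled = limit_gb * scale_factor  # value handed on by the scripting block
    return scaled / 1024              # assumption: displayed using 1024 per GB


before = displayed_gb(5, 1000)  # out-of-the-box multiplier
after = displayed_gb(5, 1024)   # corrected multiplier

print(round(before, 2))  # 4.88
print(after)             # 5.0
```

The same 2.3% shortfall applies to any limit size, so a larger profile loses proportionally more storage.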

Hope that helps you if you’re using this workflow.

Bonus content… here are my genuinely back-of-an-envelope workings!