<img class="alignright size-medium wp-image-3968" src="/images/2014/02/pernixdata1.png" alt="pernixdata" width="300" height="80" />

My lab has been without FVP since vSphere 6 was released, simply because I can’t afford to wait on learning new versions until 3rd party software catches up. It makes you truly appreciate the awesome power of FVP, even on the less than spectacular hardware in my lab, when it’s taken away for a while.

Now that FVP 3.0 has GA’d, I’m looking forward to getting my lab storage accelerated - it makes a huge difference.

What’s new in FVP 3.0? Well, to quote the release notes:

A stand alone, browser based FVP Management Console.

Support for vSphere 6.0

Performance and scalability improvements.

Ability to rename the PRNXMS database.

Online and offline license activation via the new standalone UI.

Obviously support for vSphere 6.0 was the big one I was waiting for, but don’t discount the rather understated “Performance and scalability improvements”. Not sure if renaming the database is a headline for release, but I’ll let that go. I’m really, really, REALLY hoping the license activation has improved because I found it a little clunky and frustrating before…we’ll see…

Prerequisites#

You need to be running ESXi 5.1, 5.5 or 6.0 (no 5.0 support)

You need a server running 64-bit Microsoft Windows Server 2008 or 2012 for the management server

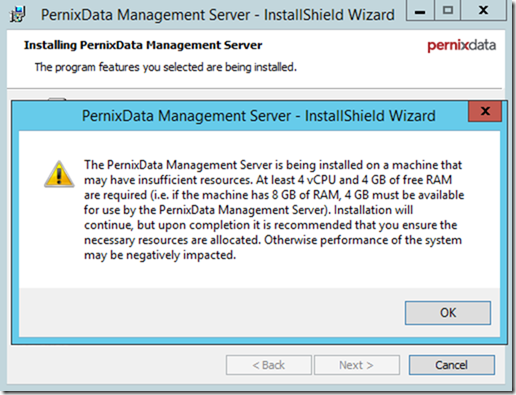

The management server should have 4 vCPU and 4GB of free RAM for a production installation; a VM with 4 vCPU and 8GB RAM is recommended. It’s possible to install with less in the lab, but that will directly affect performance, and there’s no point in scrimping on CPU/RAM for a performance solution.

.NET Framework 3.5.1 SP1 or later (activate the feature)

FVP Management Server will install Java SE 7, and Java Runtime Environment (JRE) 1.8u40. The setup program overwrites any existing installation of the JRE so be careful if you’re sharing a server with something else that uses JRE - best practice is to give FVP a dedicated server anyway.

FVP Management Server requires Microsoft SQL Server 2008, 2012 or 2014. This can be SQL Express on the local server. I’ve already got a shared SQL box in my lab running SQL 2014, so I’ll use that.

A service account for FVP to run under, access the SQL database and connect to vCenter Server. I’m using an account I’ve created [email protected] which is added to the local administrators group on my FVP Management server.

This account will also be added to the “Log on as a Service” policy

I have added it to my vCenter appliance as an administrator

I have also granted admin rights on my SQL server (this can be dropped to DBO rights on the Pernix database after installation).
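If you have more than a couple of hosts, the ESXi version requirement is easy to check with a few lines of shell. This is just a sketch of my own and not part of the FVP tooling: the function name `fvp_supported_esxi` is made up, and it assumes you feed it the version string a host reports (for example `6.0.0`).

```shell
#!/bin/sh
# Hypothetical helper (not part of FVP): returns 0 if the given ESXi version
# string is one of the releases listed in the prerequisites (5.1, 5.5, 6.0).
fvp_supported_esxi() {
    case "$1" in
        5.1|5.1.*|5.5|5.5.*|6.0|6.0.*) return 0 ;;
        *) return 1 ;;
    esac
}

# Example use in an SSH session on a host. The awk call is an assumption about
# the output layout of `esxcli system version get` (a "Version: x.y.z" line):
#   ver=$(esxcli system version get | awk '/^ *Version:/ {print $2}')
#   fvp_supported_esxi "$ver" && echo "OK: $ver" || echo "Unsupported: $ver"
```

Anything on 5.0 (or any release not in the list) returns non-zero, so the same function can gate a scripted install.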

Installing the PernixData Host Extension#

The PernixData Host Extension needs to be installed on all hosts in the cluster on which you are activating FVP. You can install using esxcli or using VMware Update Manager. Since I’m only installing on 3 hosts, I’ll use esxcli, but I think that’s probably the threshold for installing manually; any more and I’d go with VUM. Make sure you download the correct package for manual or VUM install.

Copy the extension zip to somewhere accessible by your ESXi hosts. I’ve dropped it on an NFS share that’s mounted on all my hosts.

SSH or console onto the hosts and check there are no old FVP extensions installed

esxcli software vib list | grep pernix

Put the host into maintenance mode (type out this command as the “-” character can be pasted incorrectly!)

esxcli system maintenanceMode set -e true

Install the extension using

esxcli software vib install -d /path/to/extension.zip

e.g.

esxcli software vib install -d /vmfs/volumes/IX200-Temp/FVP3.0/PernixData-host-extension-vSphere6.0.0_3.0.0.0-38306.zip

Exit maintenance mode on the host

esxcli system maintenanceMode set -e false

Rinse and repeat for all the hosts in the cluster.
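If typing the same commands on every host gets tedious, the sequence can be wrapped in a small loop. The sketch below makes several assumptions: the host names and the `ZIP` path are placeholders, root SSH is enabled on the hosts, and the function names are my own. It defaults to a dry run that only prints what it would do, and it adds a final `vib list` check to confirm the extension is present.

```shell
#!/bin/sh
# Sketch only: HOSTS and ZIP are placeholders for your own environment.
HOSTS="${HOSTS:-esxi-01 esxi-02 esxi-03}"   # hypothetical host names
ZIP="${ZIP:-/path/to/extension.zip}"        # path as seen from each host
DRY_RUN="${DRY_RUN:-1}"                     # 1 = print commands, do not run them

# Print the per-host command sequence described in the steps above,
# followed by a check that the extension is now listed.
fvp_host_steps() {
    echo "esxcli system maintenanceMode set -e true"
    echo "esxcli software vib install -d $1"
    echo "esxcli system maintenanceMode set -e false"
    echo "esxcli software vib list | grep pernix"
}

# Run (or, in dry-run mode, print) the sequence for every host in HOSTS.
fvp_install_all() {
    for h in $HOSTS; do
        fvp_host_steps "$ZIP" | while IFS= read -r cmd; do
            if [ "$DRY_RUN" = "1" ]; then
                echo "[$h] $cmd"
            else
                # -n stops ssh from swallowing the remaining commands on stdin
                ssh -n "root@$h" "$cmd" || { echo "[$h] FAILED: $cmd" >&2; break; }
            fi
        done
    done
}
```

Call `fvp_install_all` to see the plan, then set `DRY_RUN=0` to run it for real. Bear in mind that a host will only enter maintenance mode once its VMs have been migrated or powered off, so the first step can sit and wait.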

Installing the PernixData Management Server#

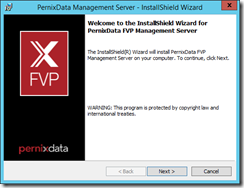

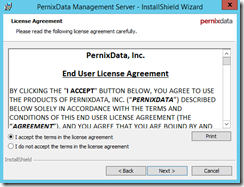

Run the installer and accept the license agreement

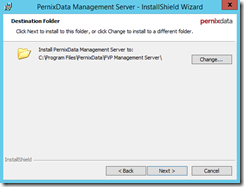

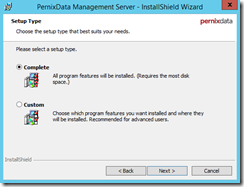

I was unable to change the location of the install - not sure why, but I’ll be reporting it back to Pernix. Select the “Complete” installation to install the Management server and the CLI tools. If you just want the Management Server or CLI tools you can do a custom install.

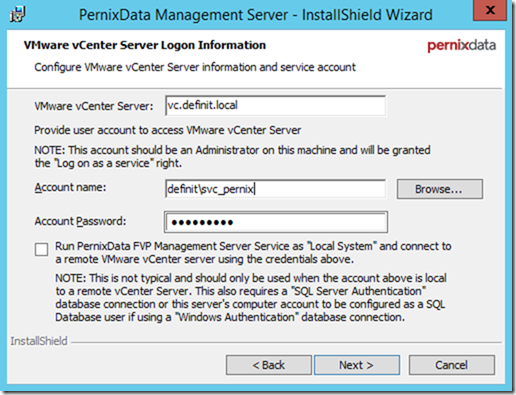

Configure the connection to vCenter Server using the previously created account (make sure to use DOMAIN\user format, a UPN such as [email protected] is not supported).

As noted in the screenshot, you can run the Management Server as “Local System” and enter details for a vCenter Server local user to connect to the vCenter Server, and then configure a SQL Server Authentication user for the database connection. I imagine this may be required in some high security implementations, but it’s more complex and may cause confusion later down the road.

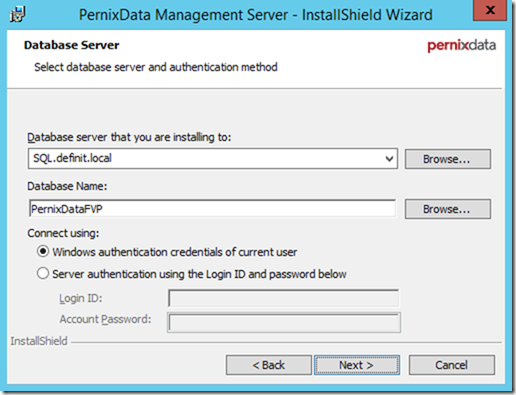

Enter the SQL server details, and either select a pre-created database or enter the name of a new database - I’m going for “PernixDataFVP”. I’m also using Windows authentication to connect as I’m running under the context of the service account.

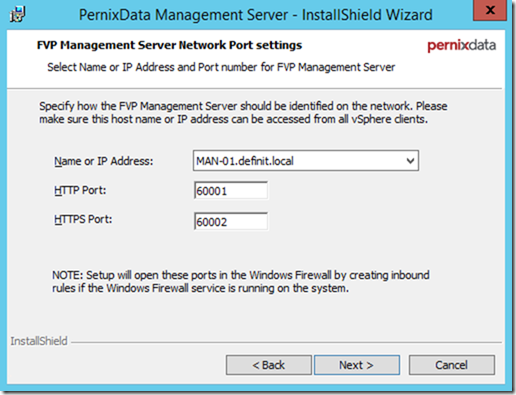

Select the name or IP address of the management server - this is the address vSphere clients must be able to access. Configure the HTTP or HTTPS port if required - I’ve left mine as default.

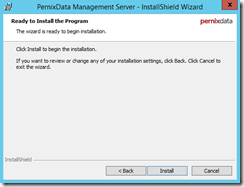

After that, continue through the installer pages until we get to the installation

Because I’m doing an install in my lab, I have less than the required RAM/CPU allocated. The Management Server will still install OK.

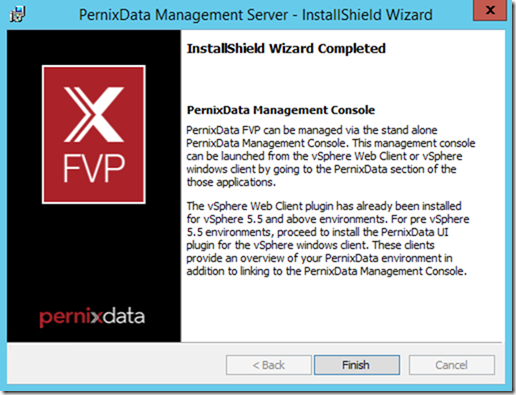

And finish!

Configuring FVP#

So far the install process has been identical to previous versions of FVP - now here comes that new management interface mentioned in the release notes!

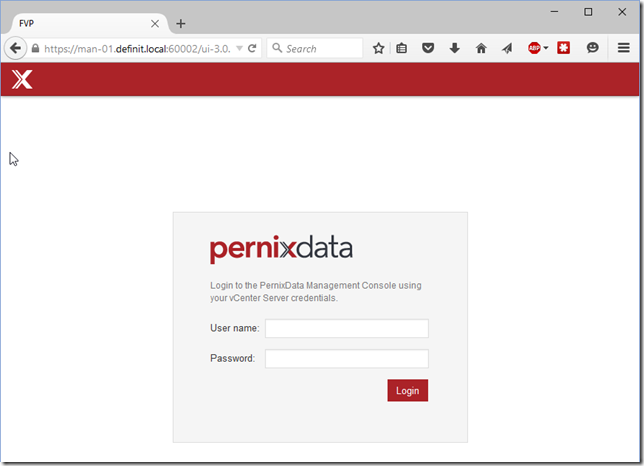

Log onto the PernixData Management Console using the HTTP address we configured earlier. Remember, if you changed ports during the install then you need to change them here:

http://manager-server.domain.local:60001/login

You’ll be redirected automatically to the HTTPS port and the login page will load. Log in using the account you configured for vCenter access - in my case definit\svc_pernix.
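If the page doesn’t load, it can help to test the URL from the command line before blaming the browser. This is a small sketch with a made-up helper name, `fvp_console_url`. It assumes 60001 (the port in the example URL above) unless you pass a different one.

```shell
#!/bin/sh
# Hypothetical helper: build the console login URL from a host name and an
# optional HTTP port. 60001 is the port used in the example URL above;
# pass your own as the second argument if you changed it during the install.
fvp_console_url() {
    echo "http://$1:${2:-60001}/login"
}

# Optional check from any machine with curl: follow the redirect and print
# the URL you end up on. -k skips certificate validation, which I'm assuming
# is needed in a lab without a trusted certificate.
#   curl -s -k -L -o /dev/null -w '%{url_effective}\n' \
#       "$(fvp_console_url manager-server.domain.local)"
```

If the final URL printed by curl is an HTTPS address, the redirect is working and any remaining problem is likely on the client side.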

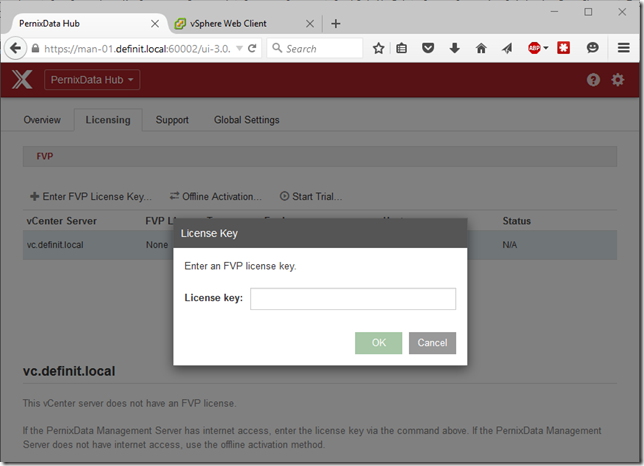

Once you’ve logged on, select the “Licensing” tab, then select your vCenter Server. Click “Enter FVP License Key” and enter your license key:

FVP will then go online and authorise your license…and that’s it, done! This is a MASSIVE improvement on the old method, which involved license servers and downloading response files. It’s how it should work - well done Pernix!

Creating FVP Clusters#

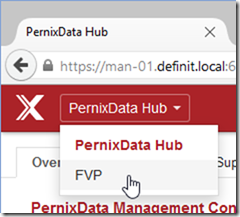

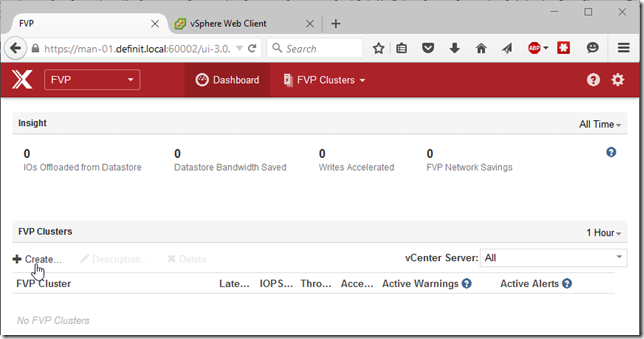

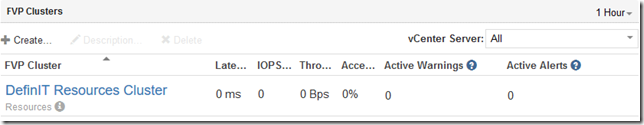

Next, select FVP from the drop down and you can see an overview of your FVP clusters - I’ve not got anything configured yet.

Click “Create” under FVP Clusters to begin the creation of your first FVP cluster (or use the FVP Clusters dropdown)

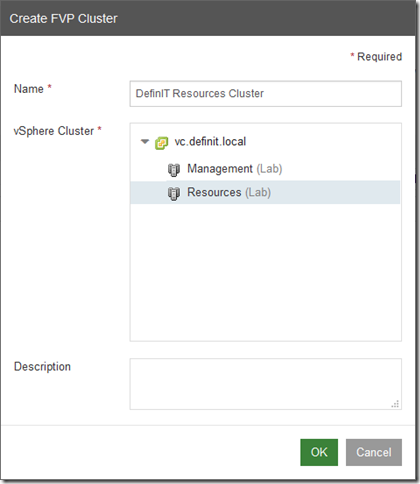

Name your new FVP cluster and select the vSphere cluster you want to enable FVP on:

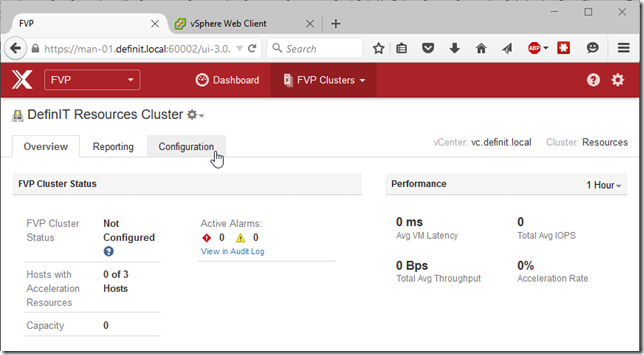

Click on the new cluster to open the cluster details

Configuring Acceleration Resources#

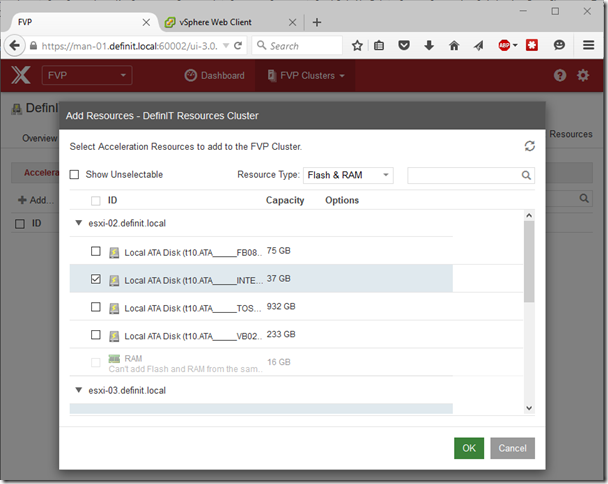

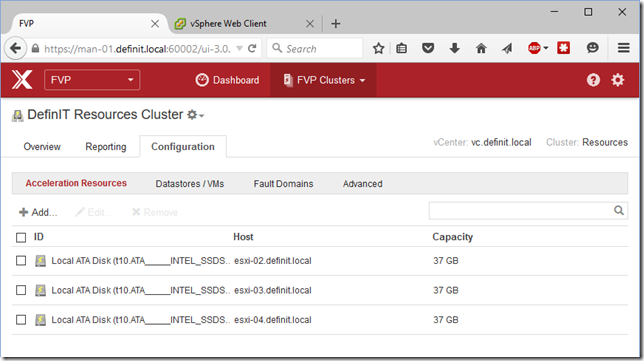

Select “Configuration” to begin configuring acceleration resources. Click “Add…” to select the flash (or RAM) you wish to use. In this cluster I have a 40GB SSD in each host that I will be using to accelerate Read/Write performance. If you wish to use RAM to accelerate your storage then you can allocate a minimum of 4GB, in 4GB increments, up to 1TB of RAM per host. You cannot use RAM and flash together to accelerate on the same host.
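The RAM sizing rule is simple enough to express as a check, which can be handy if you are planning allocations for a number of hosts. This is a sketch of my own rather than anything FVP provides: the function name is made up, and I’m treating 1TB as 1024GB.

```shell
#!/bin/sh
# Hypothetical helper: returns 0 if $1 (GB of RAM per host) fits the rule
# described above: at least 4GB, a multiple of 4GB, and no more than 1TB
# (assumed here to mean 1024GB).
fvp_valid_ram_gb() {
    case "$1" in
        ''|*[!0-9]*|0*) return 1 ;;   # reject empty, non-numeric or zero-led input
    esac
    [ "$1" -ge 4 ] && [ "$1" -le 1024 ] && [ $(( $1 % 4 )) -eq 0 ]
}

# Example:
#   fvp_valid_ram_gb 12 && echo "12GB is a valid allocation"
#   fvp_valid_ram_gb 6  || echo "6GB is not a multiple of 4GB"
```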

Add Datastores to be accelerated#

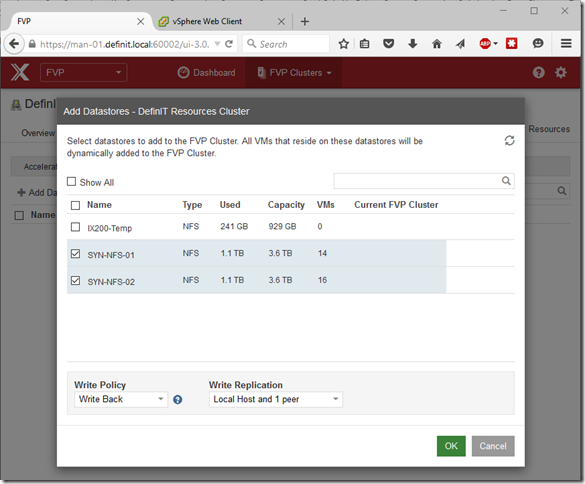

Select the Datastores/VMs tab and click “Add Datastores” to open the datastore dialogue. Select the datastores you wish to accelerate - in my case two NFS datastores on my Synology NAS. Adding the datastore automatically adds all VMs associated with that datastore to the FVP cluster, and any new VMs added to the datastore will receive the datastore’s default policy.

Next select the Write policy - the two options are Write Through, or Write Back. I won’t go into detail on the differences because 1) that would be a blog post in itself, and 2) the legendary Frank Denneman has already done so here and I’m likely to fall far short of his explanations. I’m going to select “Write Back” with a local and 1 peer copy, to provide write acceleration as well as some data protection.

To add only specific VMs, or to change the Write Policy for individual VMs, you can add VMs individually to the cluster using the “Add VMs…” button. This works in the same way so I won’t go into details, but I like the idea of setting a datastore policy that fits most VMs - e.g. Write Back with Local host and 1 peer - then assigning more peers to VMs where the data is more critical, or Write Through to VMs that aren’t in need of write acceleration.

Other configuration#

Fault Domains - configuring a fault domain allows you to replicate write data intelligently - e.g. to ensure a copy of each write is replicated to a different rack or blade chassis, protecting against the failure of either. For my lab, there’s no point in doing that, so I will leave the default Fault Domain in place, with all hosts in it.

Blacklist - if you want to ensure a VM is never accelerated, you can add it to the blacklist.

VADP - Specify VADP VMs in your environment so that FVP works seamlessly with relevant backup processes. VADP VMs won’t be accelerated by FVP.

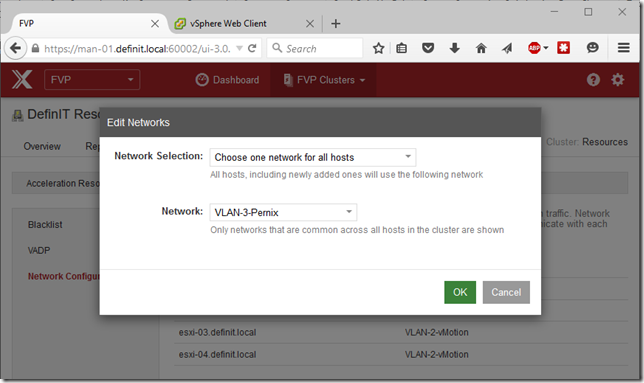

Network Configuration - by default FVP will choose the vMotion network for all of its replication traffic, but you can specify a network dedicated just to FVP. Since my lab is all on 1GB networking, I like to dedicate a single 1GB NIC just for FVP rather than sharing with vMotion. To do this, ensure you have a VMkernel on each ESXi host on the network you wish to use, and ensure they can all communicate with each other.
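A quick way to confirm the VMkernel ports can talk to each other is `vmkping` from each host. The sketch below only prints the commands to run. The interface name `vmk2` and the IP addresses in the example are placeholders for whatever you have on your FVP network, and the function name is my own.

```shell
#!/bin/sh
# Hypothetical helper: print one vmkping command per peer address.
#   $1      VMkernel interface on the FVP network (e.g. vmk2, a placeholder)
#   $2...   FVP-network IP addresses of the other hosts
fvp_vmkping_cmds() {
    vmk="$1"; shift
    for ip in "$@"; do
        echo "vmkping -I $vmk -c 3 $ip"
    done
}

# Example (placeholder addresses); run the printed commands on each host:
#   fvp_vmkping_cmds vmk2 10.0.3.12 10.0.3.13
# To see which IP each VMkernel interface has on a host:
#   esxcli network ip interface ipv4 get
```

Every host should get replies from every other host on the dedicated network before you point FVP at it.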

I have a network called “VLAN-3-Pernix” and a VMkernel configured on each host, so I can select “Chose one network for all hosts” in the “Edit Networks” dialogue, then select my network of choice:

Disclaimer: In the interests of transparency, I want to state that I am part of PernixData’s PernixPro scheme, and as such I receive an NFR license and some additional access to briefings. I would also say that I was a fan of FVP long before I was a PernixPro and would still be, even if I wasn’t part of it - it’s just a very good software solution!