One of the cool new features released with vRealize Automation 7.2 was the integration of VMware Admiral (container management) into the product, and recently VMware made version 1 of vSphere Integrated Containers generally available (GA), so I thought it was time I started playing around with the two.

In this article I’m going to cover deploying VIC to my vSphere environment and then adding that host to the vRA 7.2 container management.

Deploying vSphere Integrated Containers

VIC is deployed using a command line interface, which deploys a vApp and a container host VM onto your ESXi host or vSphere cluster. There are a LOT of different ways to configure VIC so I strongly suggest you read and digest the VIC Installation Guide. For the sake of simplicity, I’m going to deploy as basic a setup as I can figure out.
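vic-machine ships as a separate binary for each OS. I'm on a Mac, so I use vic-machine-darwin, but the VIC bundle also includes vic-machine-linux and vic-machine-windows.exe. If you script the deployment across machines, a small helper can pick the right one. This is a hypothetical convenience function (vic_machine_bin is my own name, not part of VIC), assuming the binaries are unpacked in the current directory:

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of VIC): print the path of the vic-machine
# binary that matches the local OS. Assumes the VIC bundle was unpacked
# into the current directory.
vic_machine_bin() {
  case "$(uname -s)" in
    Darwin) echo "./vic-machine-darwin" ;;
    Linux) echo "./vic-machine-linux" ;;
    MINGW*|MSYS*|CYGWIN*) echo "./vic-machine-windows.exe" ;;
    *)
      echo "unsupported OS: $(uname -s)" >&2
      return 1
      ;;
  esac
}
```

You would then call it as "$(vic_machine_bin)" create followed by the options below.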

The following command is broken down into multiple lines for readability:

```bash
./vic-machine-darwin create \
  -target '[email protected]':'VMware1!'@vcsa.definit.local/Lab \
  -compute-resource Workload \
  -image-store vsanDatastore \
  -volume-store vsanDatastore:Default \
  -bridge-network '100-VIC-Bridge' \
  -management-network '1-Management' \
  -client-network '1-Management' \
  -public-network '1-Management' \
  -name 'vic.definit.local' \
  -no-tlsverify \
  -force
```

./vic-machine-darwin is the executable (for macOS), followed by the create command. No surprises there.

-target specifies the username ([email protected]), password (VMware1!), vCenter Server (vcsa.definit.local) and Datacenter (Lab). These can be specified individually, but I think they make sense as one argument.

-compute-resource specifies the cluster (or resource pool) to deploy to, in this case "Workload".

-image-store specifies where to store the VM files, container images and everything else required to run VIC.

-volume-store specifies where Docker volumes are stored when you use the docker volume create command. I'm just specifying a default volume store for simplicity.

The -*-network flags specify each of the four network types for VIC. I've used '1-Management' for management, client and public traffic, and '100-VIC-Bridge' for the bridge network. Understanding all of the networks is really important, so I suggest reading that section of the deployment guide carefully!

-name specifies the name of the vApp and VIC host VM in vSphere.

-no-tlsverify allows us to connect to the API without client certificates. Again, I'm going for simplicity here, not security!

-force tells vic-machine to continue on non-fatal errors (like an untrusted vCenter certificate).
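If you find yourself redeploying often, it can help to keep these settings in variables. Here's a minimal sketch, assuming bash: vic_create is a hypothetical wrapper that builds the same argument list as the command above in a bash array (so values with shell-special characters, like the '!' in a password, pass through intact), prints the command by default, and only runs vic-machine when RUN=1. The credentials in VIC_TARGET are placeholders, not real values.

```bash
#!/usr/bin/env bash
# Sketch: build the vic-machine arguments in an array and either preview
# or execute them. All variable names here are hypothetical; the values
# mirror the lab deployment, with placeholder credentials.
VIC_BIN="${VIC_BIN:-./vic-machine-darwin}"
VIC_TARGET="${VIC_TARGET:-user:password@vcsa.definit.local/Lab}"  # placeholder credentials
VIC_NAME="${VIC_NAME:-vic.definit.local}"
MGMT_NET="1-Management"
BRIDGE_NET="100-VIC-Bridge"

vic_create() {
  local args=(
    create
    -target "$VIC_TARGET"
    -compute-resource Workload
    -image-store vsanDatastore
    -volume-store vsanDatastore:Default
    -bridge-network "$BRIDGE_NET"
    -management-network "$MGMT_NET"
    -client-network "$MGMT_NET"
    -public-network "$MGMT_NET"
    -name "$VIC_NAME"
    -no-tlsverify
    -force
  )
  if [ "${RUN:-0}" = "1" ]; then
    # Actually run the deployment.
    "$VIC_BIN" "${args[@]}"
  else
    # Preview only: print each argument with shell quoting applied.
    printf '%q ' "$VIC_BIN" "${args[@]}"
    printf '\n'
  fi
}
```

Running vic_create on its own previews the command; RUN=1 vic_create performs the deployment.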

Running the command:

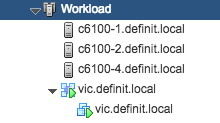

From the output, you can see that my new VIC host has an IP address of 192.168.1.216. If I check in vSphere, I can see the deployed vApp and VIC host:

And I can validate the connection and deploy a photon container using docker commands:

```bash
docker -H 192.168.1.216:2376 --tls info
docker -H 192.168.1.216:2376 --tls pull vmware/photon
docker -H 192.168.1.216:2376 --tls run -i vmware/photon
docker -H 192.168.1.216:2376 --tls ps -a
```
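Typing -H 192.168.1.216:2376 --tls on every command gets old quickly, so a small shell function can carry those arguments for you. This is a sketch; vdocker, VIC_HOST, VIC_PORT and DOCKER_BIN are names I've made up for it. Note that --tls on its own encrypts the connection but doesn't verify the server certificate, which suits this lab host and its self-signed certificate.

```bash
#!/usr/bin/env bash
# Sketch: wrap the repeated "-H <host>:<port> --tls" arguments.
# All names here are hypothetical; defaults match the lab VIC host.
VIC_HOST="${VIC_HOST:-192.168.1.216}"
VIC_PORT="${VIC_PORT:-2376}"
DOCKER_BIN="${DOCKER_BIN:-docker}"

vdocker() {
  # --tls: use TLS without verifying the server certificate.
  "$DOCKER_BIN" -H "${VIC_HOST}:${VIC_PORT}" --tls "$@"
}
```

With that defined, the validation steps become vdocker info, vdocker pull vmware/photon, vdocker run -i vmware/photon and vdocker ps -a.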

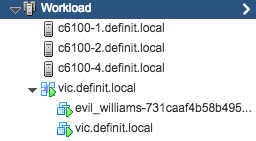

Here you can see that the new container, evil_williams, is running:

Adding vSphere Integrated Containers to vRealize Automation 7.2

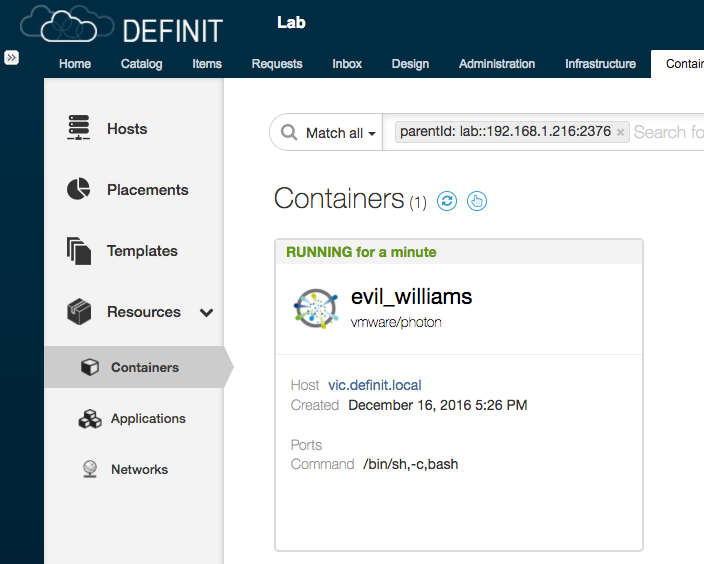

Adding the new VIC host to the Admiral interface that's baked into vRealize Automation is really child's play: enter the address and port. Because we specified -no-tlsverify, we don't need a login credential (i.e. a client certificate) to use the API. We'll also get an untrusted-certificate warning when we verify the host, because the VIC certificate is self-signed.
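Before adding the host, it can be worth confirming that the endpoint answers the way Admiral will talk to it: over HTTPS on port 2376, with no client certificate. The sketch below assumes the Docker Remote API /info path is served unversioned on that port, and uses curl's --insecure because the certificate is self-signed; vic_api_url and check_vic_api are hypothetical names.

```bash
#!/usr/bin/env bash
# Sketch: probe the VIC Docker API endpoint over HTTPS.
# Assumption: the Docker Remote API is served at the root of port 2376.

# Build the URL for a given host, optional port and optional API path.
vic_api_url() {
  local host="$1" port="${2:-2376}" path="${3:-info}"
  printf 'https://%s:%s/%s\n' "$host" "$port" "$path"
}

# --insecure skips server-certificate verification (self-signed cert);
# no client certificate is sent, matching a -no-tlsverify deployment.
check_vic_api() {
  curl --silent --show-error --insecure --max-time 10 "$(vic_api_url "$@")"
}
```

If the host is reachable, check_vic_api 192.168.1.216 should return a JSON document describing the host.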

I've created a "Placement zone", effectively a logical container for the container hosts within vRA/Admiral. You don't strictly need one to add the host, but you will later.

And that's it: my host is added. You can even see that the container we created to validate the VIC installation has been inventoried:

Hopefully that's a helpful overview of the process of adding VIC to vRA 7.2. Over the next few weeks I'm planning to write some more posts that go into more depth as I dig through, so watch this space if you're interested!