This series was originally going to be a more polished endeavour, but unfortunately time got in the way. A prod from James Kilby (@jameskilbynet) has convinced me to publish as is, as a series of lab notes. Maybe one day I’ll loop back and finish them…

Requirements#

Routing#

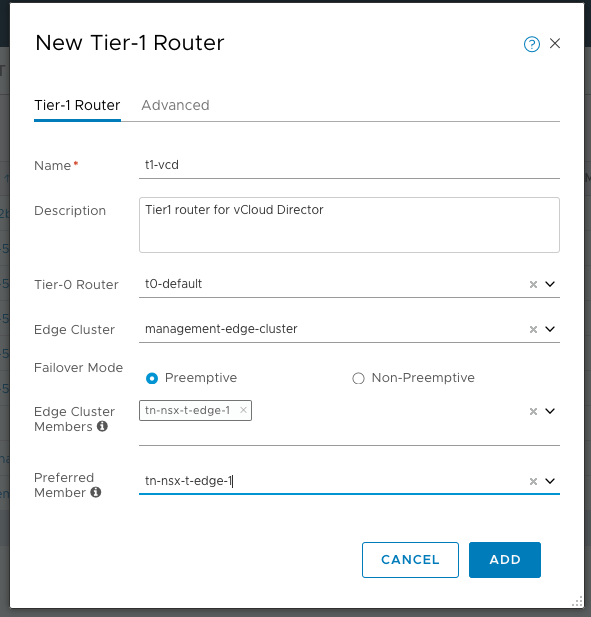

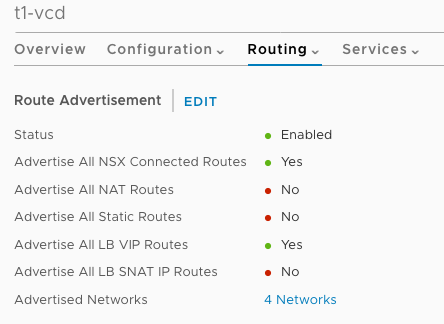

Because I’m backing my vCloud Director installation with NSX-T, I will be using my existing Tier-0 router, which peers with my physical router via BGP. The Tier-0 router will be connected to the Tier-1 router, and the NSX-T logical switches will be connected to the Tier-1. Their IP networks are advertised to the Tier-0 (using NSX-T’s internal routing mechanism) and then up to the physical router via eBGP.

The Tier-1 router will be created in Active-Standby mode because it will also provide the load balancing services later.

Logical Switches#

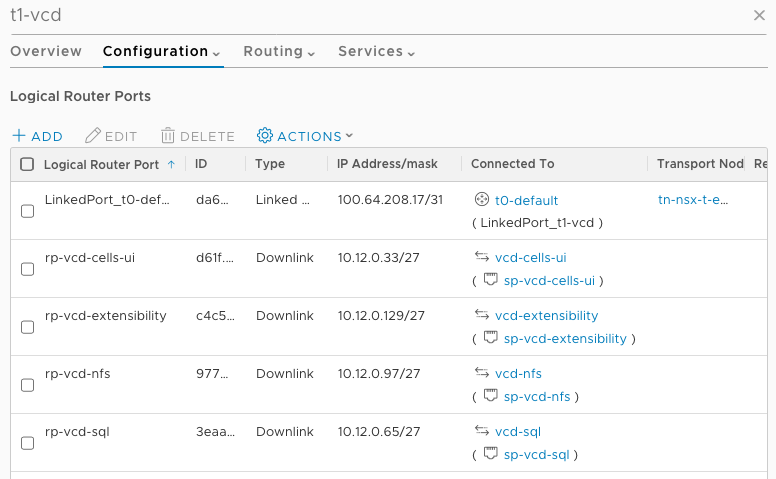

I want to build vCloud Director as many Service Provider customers do, with different traffic types separated by logical switches. I will be using 10.12.0.0/24, subnetted into smaller /27 networks to avoid wasting IPs (a typical Service Provider requirement). To that end, I am deploying four NSX-T logical switches:

- vCloud Director API/UI/Console

- vCloud Director SQL

- vCloud Director NFS

- vCloud Director RabbitMQ/Orchestrator

The four logical switches have been connected to the Tier-1 router created for vCloud Director, and each has a router port configured in the correct subnet.
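As a quick sanity check on the addressing, the sketch below enumerates the /27 networks inside 10.12.0.0/24. A /27 spans 32 addresses, so the /24 yields eight of them, of which the four logical switches use four. The function name `list_27_subnets` is made up for this note, and placing the router port on the first usable address of each subnet is an assumption, not something these notes state.

```shell
#!/bin/sh
# List every /27 inside a /24.
# Argument: the first three octets of the /24 (for example 10.12.0).
list_27_subnets() {
    prefix="$1"
    block=32                               # 2^(32-27) addresses per /27
    start=0
    while [ "$start" -lt 256 ]; do
        gateway=$((start + 1))             # assumption: router port on the first usable IP
        broadcast=$((start + block - 1))   # last address of the /27
        echo "${prefix}.${start}/27 gateway=${prefix}.${gateway} broadcast=${prefix}.${broadcast}"
        start=$((start + block))
    done
}

list_27_subnets 10.12.0
```

Each /27 leaves 30 usable addresses (32 minus the network and broadcast addresses), which is plenty for a handful of cells plus a router port.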

Load Balancing#

There are various load balancing requirements for the full vCloud Director installation, which will be fulfilled by the NSX-T Logical Load Balancer on the Tier-1 router:

- vCloud Director API/UI

- vCloud Director Console

- vCloud Director RabbitMQ

- vRealize Orchestrator

The actual load balancer configuration will be done later, once I have the components deployed.

DNS A and PTR#

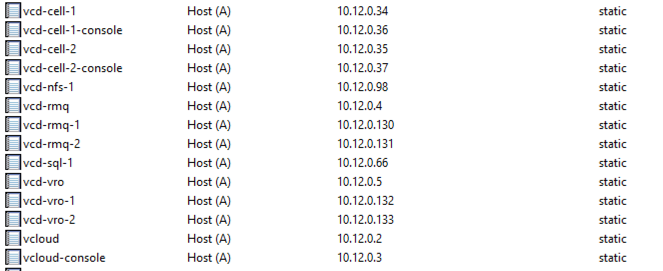

All the VMs that are part of the vCloud Director installation will require A and PTR (forward and reverse) lookup records.

Notice that the VCD cells have two IPs per VM: one for UI/API traffic and one for Console traffic. Two records are also created for the load balancer URLs for vRealize Orchestrator and RabbitMQ.
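Before moving on, it can be worth confirming that each forward record has a matching reverse record. The following is a minimal sketch, assuming `dig` is available (on CentOS 7 it ships in the `bind-utils` package). The helper names `ptr_name` and `check_dns` are hypothetical, as are the hostname and IP in the usage comment.

```shell
#!/bin/sh
# Build the reverse-lookup (PTR) name for an IPv4 address by reversing its octets.
ptr_name() {
    echo "$1" | awk -F. '{ print $4 "." $3 "." $2 "." $1 ".in-addr.arpa" }'
}

# Check that a hostname resolves to the expected IP and that the IP resolves back.
# Arguments: fully qualified hostname, expected IPv4 address.
check_dns() {
    host_fqdn="$1"
    expected_ip="$2"
    forward=$(dig +short A "$host_fqdn" | head -n 1)
    reverse=$(dig +short PTR "$(ptr_name "$expected_ip")" | head -n 1)
    [ "$forward" = "$expected_ip" ] || { echo "A mismatch for $host_fqdn: got '$forward'"; return 1; }
    # dig prints PTR targets with a trailing dot
    [ "$reverse" = "${host_fqdn}." ] || { echo "PTR mismatch for $expected_ip: got '$reverse'"; return 1; }
    echo "OK $host_fqdn <-> $expected_ip"
}

# Usage (hostname and IP are placeholders, not values from this lab):
#   check_dns vcd-sql-1.lab.example 10.12.0.35
```

For the VCD cells, the check would need to be run once per IP, since each cell has separate UI/API and Console addresses.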

VM Sizing#

The vCloud Director cells, PostgreSQL database and RabbitMQ will be deployed using a standard CentOS 7 template. vRealize Orchestrator is deployed as an appliance. The open-vm-tools package is installed on the template.

- vcd-sql-1: 2 CPU, 4 GB RAM, 40 GB disk

Updates#

All VMs have been updated using `yum update -y`.

NTP#

All VMs are configured to use a default NTP source:

yum install -y ntp

systemctl enable ntpd

systemctl start ntpd
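Once `ntpd` has been running for a few minutes, `ntpq -pn` lists its peers and marks the one it is synchronised to with a leading `*`. A small sketch for checking that follows. The helper name `has_sync_peer` is made up for this note, and it reads the peer list from standard input so it can be used against saved output as well.

```shell
#!/bin/sh
# Succeeds if ntpq peer output (read from stdin) contains a selected sync peer.
# ntpq marks the peer it is synchronised to with a leading '*'.
has_sync_peer() {
    grep -q '^\*'
}

# Usage on a VM:
#   ntpq -pn | has_sync_peer && echo "NTP synchronised"
```

A freshly started `ntpd` can take several polling intervals to select a peer, so a failure straight after `systemctl start ntpd` is not necessarily a problem.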

Disable SELINUX#

Set SELINUX=disabled in /etc/selinux/config, then reboot for the change to take effect:

sed -i 's/^SELINUX=.*/SELINUX=disabled/g' /etc/selinux/config && cat /etc/selinux/config && reboot
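After the reboot, the change can be confirmed in two places: the config file and the running state. The sketch below reads the configured mode back out of the file. The function name `selinux_config_mode` is hypothetical, and the optional file argument exists only so the helper can be tried against a scratch copy.

```shell
#!/bin/sh
# Print the SELINUX= mode configured in a file (default: /etc/selinux/config).
# The pattern requires '=' directly after SELINUX, so SELINUXTYPE= is not matched.
selinux_config_mode() {
    sed -n 's/^SELINUX=//p' "${1:-/etc/selinux/config}"
}

# Usage after the reboot:
#   selinux_config_mode     # expected: disabled
#   getenforce              # expected: Disabled
```

`getenforce` reports the running state, so it only shows `Disabled` once the VM has actually been rebooted with the new config.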