I’ve done a fair amount of work learning VMware PKS and NSX-T, but I wanted to drop down a level and get more familiar with the inner workings of Kubernetes, as well as explore some of the newer features exposed by the NSX Container Plugin (NCP) that are not yet in the PKS integrations.

The NSX-T docs are…not great. I certainly don’t think you can work out the steps required from the official NCP installation guide without a healthy dollop of background knowledge and familiarity with Kubernetes and CNI. Anthony Burke published this guide, which is great, and I am lucky enough to be able to pick his brains on our corporate Slack.

So, what follows is a step-by-step guide that is the product of my learning process - I can’t guarantee it’s perfect, but I’ve tried to write these steps in a logical order, validate them and add some explanation as I go through. If you spot a mistake, please feel free to get in touch (for my learning, and so I can correct it!).

Preparing the Nodes#

Software Versions#

| Component | Version | Notes |
|---|---|---|
| NSX-T | 2.4.1 | Latest at time of writing |
| Server OS | Ubuntu 16.04 | 16.04 is the most recent supported by NSX-T NCP |
| Docker | current | |
| Kubernetes | 1.13.5-00 | Required for NSX-T NCP |
| Open vSwitch | 2.10.2 | Must be the version packaged with NSX-T 2.4 |

Be sure to check compatibility requirements for the NSX Container Plug-in https://docs.vmware.com/en/VMware-NSX-T-Data-Center/2.4/com.vmware.nsxt.ncp_kubernetes.doc/GUID-AD7BA088-2A51-4D5F-BC86-6EE14CE17665.html

Ubuntu VMs#

Three Ubuntu 16.04 virtual machines were deployed, each with 2 vCPU and 4GB RAM. The VMs are default installations, and this guide assumes the same.

DNS records have been created for each VM.

| Name | Role | FQDN | IP |
|---|---|---|---|
| k8s-m01 | Master | k8s-m01.definit.local | 10.0.0.10 |
| k8s-w01 | Worker | k8s-w01.definit.local | 10.0.0.11 |
| k8s-w02 | Worker | k8s-w02.definit.local | 10.0.0.12 |

Network adapters#

Each node is configured with three network adapters.

| Network | NIC | IP Addressing | Notes |
|---|---|---|---|
| Management | ens160 | 192.168.10.0/24 | VLAN backed network used for management access from the Kubernetes nodes to the NSX Manager |
| Kubernetes Access | ens192 | 10.0.0.0/24 | NSX-T segment (logical switch) that’s connected to the tier 1 router (t1-kubernetes) and provides access to Kubernetes nodes. Default route. |
| Kubernetes Transport | ens224 | None | NSX-T segment (logical switch) that provides a transport network. |

Example /etc/network/interfaces file:

iface lo inet loopback

auto lo

# management

auto ens160

iface ens160 inet static

address 192.168.10.90

netmask 255.255.255.0

# k8s-access

auto ens192

iface ens192 inet static

address 10.0.0.10

netmask 255.255.255.0

gateway 10.0.0.1

dns-nameservers 192.168.10.17

dns-search definit.local

# k8s-transport

auto ens224

iface ens224 inet manual

up ip link set ens224 up

Install Docker and Kubernetes#

Disable swap (required for kubelet to run), install Docker, and install Kubernetes 1.13.

Master and Worker Nodes#

# Disable swap

sudo swapoff -a

# Update and upgrade

sudo apt-get update && sudo apt-get upgrade -y

# Install docker

sudo apt-get install -y docker.io apt-transport-https curl

# Start and enable docker

sudo systemctl start docker.service

sudo systemctl enable docker.service

# Add the Kubernetes repository and key

echo "deb http://apt.kubernetes.io/ kubernetes-xenial main" | sudo tee -a /etc/apt/sources.list.d/kubernetes.list

curl -s https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add -

# Update package lists

sudo apt-get update

# Install kubernetes 1.13 and hold the packages

sudo apt-get install -y kubelet=1.13.5-00 kubeadm=1.13.5-00 kubectl=1.13.5-00

sudo apt-mark hold kubelet kubeadm kubectl

You should also edit /etc/fstab and comment out the swap line to ensure swap stays disabled after a reboot.
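That edit can be scripted so it is identical on all three nodes. What follows is a minimal sketch rather than a tested procedure: the function name `comment_out_swap` is my own invention, and it assumes a sed that supports `-E` and `-i` with a backup suffix (GNU sed on Ubuntu does).

```shell
#!/bin/sh
# Sketch: comment out active swap entries in an fstab-style file.
# comment_out_swap is a hypothetical helper name, not part of any package.
comment_out_swap() {
    # $1 = path to the fstab file; the original is kept as "$1.bak".
    # Skip lines that are already comments, then prefix "#" to any line
    # whose third field (the filesystem type) is "swap".
    sed -i.bak -E \
        '/^[[:space:]]*#/!s/^([^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/#\1/' \
        "$1"
}

# On a node (as root):  comment_out_swap /etc/fstab
```

Running it a second time is harmless, because lines that are already commented are skipped. The result should resemble the example file that follows.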

# /etc/fstab: static file system information.

#

# Use 'blkid' to print the universally unique identifier for a

# device; this may be used with UUID= as a more robust way to name devices

# that works even if disks are added and removed. See fstab(5).

#

# <file system> <mount point> <type> <options> <dump> <pass>

/dev/mapper/ubuntu--1604--k8s--vg-root / ext4 errors=remount-ro 0 1

# /boot was on /dev/sda1 during installation

UUID=0caaf4eb-734a-43f0-b4ef-b7b0a012abfc /boot ext2 defaults 0 2

#/dev/mapper/ubuntu--1604--k8s--vg-swap_1 none swap sw 0 0

Install the CNI Plugin#

Download the NSX Container zip package to the home folder

Master and Worker Nodes#

# Validate apparmor is enabled (expected output is "Y")

sudo cat /sys/module/apparmor/parameters/enabled

# Extract the NSX Container package

unzip nsx-container-2.4.1.13515827.zip

# Install the CNI Plugin

sudo dpkg -i nsx-container-2.4.1.13515827/Kubernetes/ubuntu_amd64/nsx-cni_2.4.1.13515827_amd64.deb

Install Open vSwitch#

You must install the Open vSwitch package bundled with the NSX Container plugin

Master and Worker Nodes#

# Install dependencies

sudo apt-get install -y dkms libc6-dev make python python-six python-argparse

# Install OVS

sudo dpkg -i \
  nsx-container-2.4.1.13515827/OpenvSwitch/xenial_amd64/openvswitch-datapath-dkms_2.10.2.13185890-1_all.deb \
  nsx-container-2.4.1.13515827/OpenvSwitch/xenial_amd64/openvswitch-common_2.10.2.13185890-1_amd64.deb \
  nsx-container-2.4.1.13515827/OpenvSwitch/xenial_amd64/openvswitch-switch_2.10.2.13185890-1_amd64.deb \
  nsx-container-2.4.1.13515827/OpenvSwitch/xenial_amd64/libopenvswitch_2.10.2.13185890-1_amd64.deb

Configure Open vSwitch#

Open vSwitch should be configured to use the k8s-transport network interface (ens224) for overlay traffic.
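Before touching the bridge, it can be worth confirming that the Open vSwitch packages on the node really are the ones from the NSX bundle and not a distribution build. A rough sketch: the helper names `deb_package`, `deb_version` and `bundled_version_installed` are illustrative, and the check relies only on the usual `<package>_<version>_<arch>.deb` file naming plus `dpkg-query`. It assumes the packages carry no epoch in their version.

```shell
#!/bin/sh
# Sketch: compare an installed package version with the version encoded
# in a bundled .deb file name (<package>_<version>_<arch>.deb).
# All three function names are hypothetical helpers.

deb_package() {
    file=${1##*/}                  # strip any directory prefix
    printf '%s\n' "${file%%_*}"    # text before the first underscore
}

deb_version() {
    file=${1##*/}                  # strip any directory prefix
    rest=${file#*_}                # drop "<package>_"
    printf '%s\n' "${rest%%_*}"    # keep text up to "_<arch>.deb"
}

bundled_version_installed() {
    # Succeeds only if dpkg reports exactly the bundled version.
    # Assumption: the installed version string has no epoch prefix.
    want=$(deb_version "$1")
    have=$(dpkg-query -W -f='${Version}' "$(deb_package "$1")" 2>/dev/null)
    [ -n "$have" ] && [ "$want" = "$have" ]
}

# Example, run from the folder containing the extracted bundle:
#   for deb in nsx-container-2.4.1.13515827/OpenvSwitch/xenial_amd64/*.deb; do
#       bundled_version_installed "$deb" || echo "mismatch: $deb"
#   done
```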

Master and Worker Nodes#

# Create the OVS Bridge

sudo ovs-vsctl add-br br-int

# Assign the k8s-transport interface for overlay traffic

sudo ovs-vsctl add-port br-int ens224 -- set Interface ens224 ofport_request=1

# Bring the bridge and overlay interface up

sudo ip link set br-int up

sudo ip link set ens224 up

Configure NSX-T Resources#

NSX-T 2.4 introduces a new intent-based policy API, which is reflected in the changes in the user interface. Unfortunately the NCP does not use the new policy API, so the objects created for consumption by NCP must be created using the “Advanced Networking and Security” tab.

For all components, collect the object ID for later use.

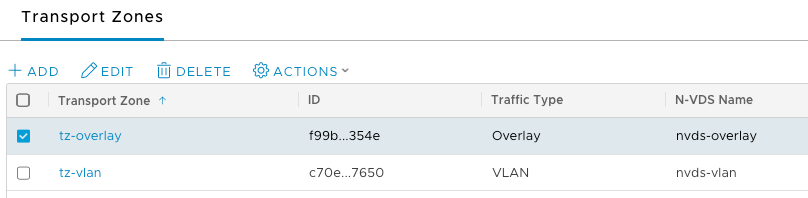

Overlay Transport Zone#

Create an overlay transport zone, or use an existing one.

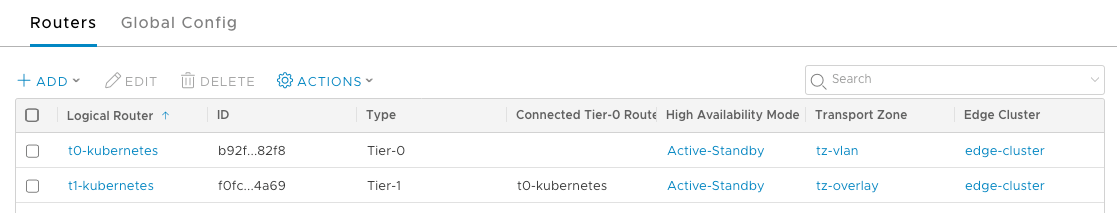

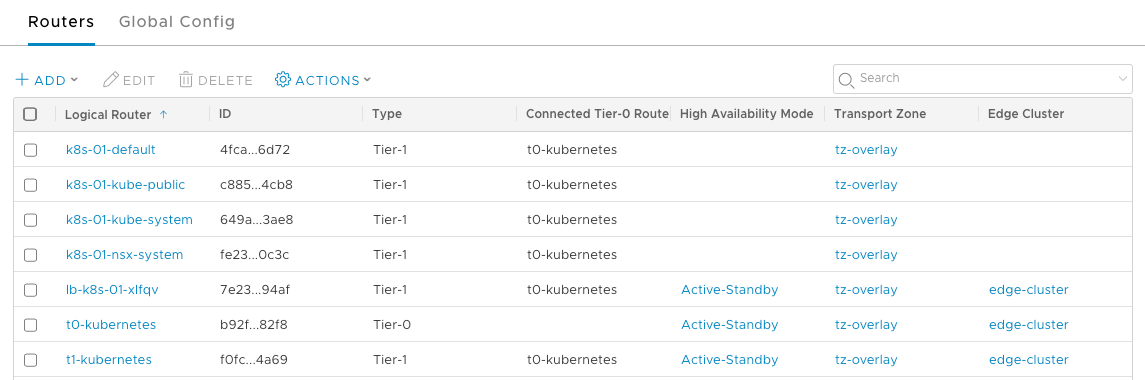

Routers#

Tier 0#

Deploy and configure a tier 0 router; this will be the point of ingress/egress to the NSX-T networks. The router must be created in active/standby mode, since stateful services (NAT) are required.

Tier 1#

Deploy and configure a tier 1 router; this router will provide access to the Kubernetes cluster via the k8s-access segment created below. The tier 1 router should be connected to the tier 0 router, and a router port configured for the k8s-access segment.

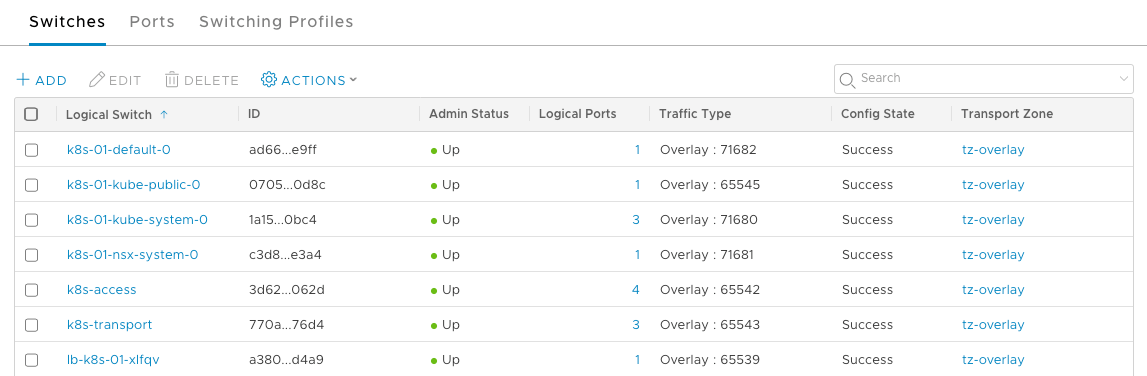

Segments#

k8s-transport#

The switch ports for the Kubernetes nodes on the k8s-transport segment need to be tagged so that the NCP can identify them.

| Tag | Scope |
|---|---|
| <node name> | ncp/node_name |
| <kubernetes cluster name> | ncp/cluster |
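As a concrete illustration, here is how the table expands for this lab. It assumes the cluster name `k8s-01` (the value used for `cluster` in ncp.ini later in this guide) and that each tag value matches the node name Kubernetes reports; the `node_tags` function is just a throwaway helper for printing the pairs, not an NSX tool.

```shell
#!/bin/sh
# Sketch: print the scope/tag pairs to apply to each node's
# k8s-transport switch port. node_tags is a hypothetical helper.
node_tags() {
    cluster=$1    # first argument: Kubernetes cluster name
    shift         # remaining arguments: node names
    for node in "$@"; do
        printf '%s\tscope=ncp/node_name tag=%s\n' "$node" "$node"
        printf '%s\tscope=ncp/cluster tag=%s\n' "$node" "$cluster"
    done
}

node_tags k8s-01 k8s-m01 k8s-w01 k8s-w02
```

Each node ends up with two tags on its transport port: one naming the node, one naming the cluster.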

k8s-access#

The k8s-access segment provides API access to the Kubernetes cluster. It should be created and connected to a router port on the tier 1 router.

IP Blocks#

| Name | CIDR | Notes |
|---|---|---|
| k8s-pod-network | 172.16.0.0/16 | |

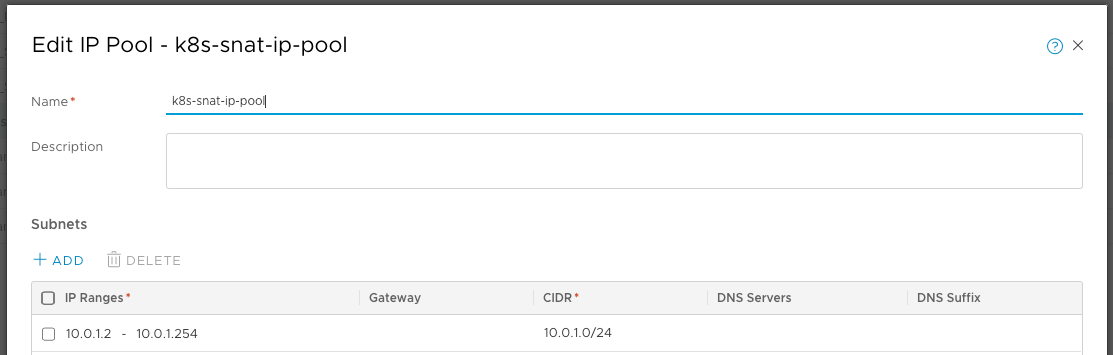

IP Pool for SNAT#

Create an IP pool in NSX Manager for allocating the IP addresses used to translate Pod IPs and expose Ingress controllers through SNAT/DNAT rules. These IP addresses are also referred to as external IPs.

| Name | Range | CIDR |
|---|---|---|
| k8s-snat | 10.0.1.1-10.0.1.254 | 10.0.1.0/24 |

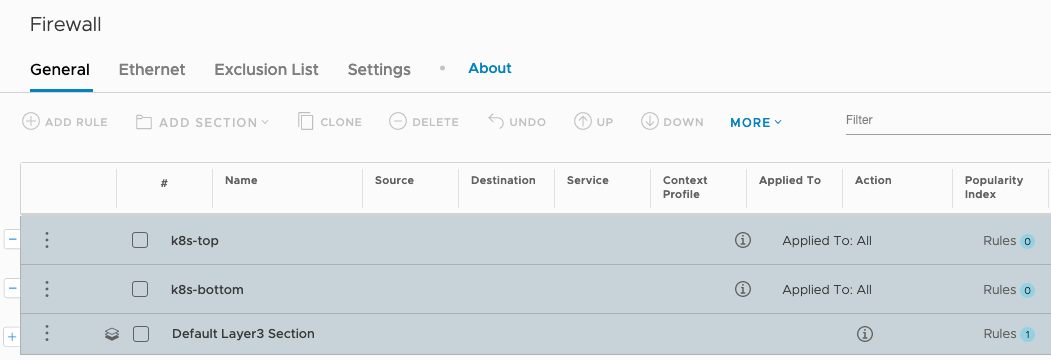

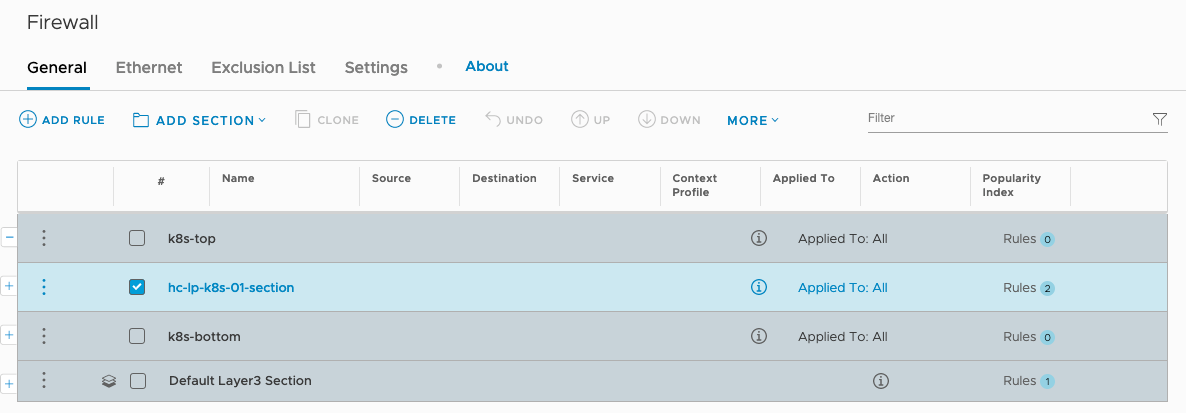
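To get a feel for how far these ranges stretch, some quick arithmetic helps. The sketch below assumes the /16 pod block is carved into /24 subnets (the `subnet_prefix = 24` setting that appears in ncp.ini later). My understanding is that NCP takes one subnet per namespace and, with SNAT enabled, one external IP per namespace; treat both as assumptions to check against the NCP documentation. The function names are mine.

```shell
#!/bin/sh
# Sketch: rough capacity arithmetic for the pod IP block and its subnets.
# subnets_in_block and hosts_per_subnet are hypothetical helper names.

subnets_in_block() {
    # $1 = block prefix length, $2 = subnet prefix length
    echo $(( 1 << ($2 - $1) ))
}

hosts_per_subnet() {
    # $1 = subnet prefix length; excludes network and broadcast addresses
    echo $(( (1 << (32 - $1)) - 2 ))
}

echo "subnets in 172.16.0.0/16 at /24: $(subnets_in_block 16 24)"
echo "addresses per /24 subnet:        $(hosts_per_subnet 24)"
```

That works out to 256 subnets of 254 addresses each. On those assumptions the 254-address k8s-snat range, not the pod block, would be the first to run out.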

Firewall#

NCP creates its firewall rules, for pod isolation and for access policy, between a “top” and a “bottom” marker section. By default, if these marker sections are not created, all isolation rules will be created at the bottom of the list, and all access policy sections will be created at the top. If you want to control the rule positions (why wouldn’t you?!) you need to create the two marker sections.

Collect the component IDs to use later in the NCP configuration:

| Component | Name | ID |
|---|---|---|
| Tier 0 Router | t0-kubernetes | b92fbb43-b664-46e9-bd4a-d8d46dac82f8 |
| Overlay Transport Zone | tz-overlay | f99ba556-eafe-4c23-beaf-8df1723a354e |
| Pod IP Block | k8s-pod-network | ab81892f-eb9a-4248-8606-b26f2293fa83 |
| External IP Pool | k8s-snat-ip-pool | dde463fb-15d9-456f-aa3d-030e24f1b1db |
| Top Firewall Marker | k8s-top | f887ba4f-d634-44ff-8338-05c4a214cc93 |
| Bottom Firewall Marker | k8s-bottom | 820da106-a35c-43c0-bcf0-edaf94004d9e |
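These IDs get pasted into ncp.ini later, and a truncated copy is an easy mistake to make. A small sketch for sanity-checking them first; `is_uuid` is a hypothetical helper, and it only checks the shape of the string, not that the object exists in NSX Manager.

```shell
#!/bin/sh
# Sketch: check that collected NSX object IDs are well-formed UUIDs.
# is_uuid is a hypothetical helper; it validates format only.
is_uuid() {
    printf '%s\n' "$1" |
        grep -Eq '^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$'
}

# IDs from the table above; anything malformed is reported.
for id in \
    b92fbb43-b664-46e9-bd4a-d8d46dac82f8 \
    f99ba556-eafe-4c23-beaf-8df1723a354e \
    ab81892f-eb9a-4248-8606-b26f2293fa83 \
    dde463fb-15d9-456f-aa3d-030e24f1b1db \
    f887ba4f-d634-44ff-8338-05c4a214cc93 \
    820da106-a35c-43c0-bcf0-edaf94004d9e
do
    is_uuid "$id" || echo "malformed ID: $id"
done
```

No output means every ID has the expected 8-4-4-4-12 shape.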

Create the Kubernetes Cluster#

Initialise the Cluster#

Master Node#

# Initialise the cluster using the master node's k8s-access IP address

sudo kubeadm init --apiserver-advertise-address=10.0.0.10

# Copy the Kubernetes config to the non-root user

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Join the Cluster from the worker nodes#

Grab the join command from the output of the master node init command.
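If the init output has scrolled away, `kubeadm token create --print-join-command` on the master prints a fresh join command. Either way, a mangled paste is easy to miss, so here is a rough sketch of a format check for the two secrets in the command. The helper names are made up for illustration, and the patterns reflect the token and hash formats as I understand them (a 6.16 character token and a SHA-256 hex digest).

```shell
#!/bin/sh
# Sketch: format checks for the values passed to "kubeadm join".
# valid_token and valid_ca_hash are hypothetical helper names.

valid_token() {
    # Bootstrap tokens look like abcdef.0123456789abcdef
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

valid_ca_hash() {
    # --discovery-token-ca-cert-hash takes sha256:<64 hex characters>
    printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# Example (TOKEN and HASH are placeholders for your own values):
#   valid_token "$TOKEN" && valid_ca_hash "$HASH" || echo "check the join command"
```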

Worker Nodes#

# Join each node

sudo kubeadm join 10.0.0.10:6443 --token u1kyen.mr7aeihrkkuz597h --discovery-token-ca-cert-hash sha256:f214d9abdadd1752405250bc99c69dee4d51d24d1940b8984948c4bee7c382a3

Configuring NCP#

Load the NCP Docker Image#

Import the NCP docker image to the local repository on each Kubernetes node. The image will be loaded with a long and ugly name “registry.local/2.4.1.13515827/nsx-ncp-ubuntu”, so to keep the config files simple and to help with upgrades later, tag the image to create an alias.

Master and Worker Nodes#

sudo docker load -i nsx-container-2.4.1.13515827/Kubernetes/nsx-ncp-ubuntu-2.4.1.13515827.tar

sudo docker image tag registry.local/2.4.1.13515827/nsx-ncp-ubuntu nsx-ncp-ubuntu:2.4.1.13515827

Now you can refer to the image as nsx-ncp-ubuntu:2.4.1.13515827 in the config files.

Create a namespace, roles, service accounts#

The NCP pod can run in the default or kube-system namespaces; however, creating a dedicated namespace for these pods is considered a best practice and allows more granular control over access and permissions.

# Create Namespace for NSX resources
kind: Namespace
apiVersion: v1
metadata:
  name: nsx-system

The recommended RBAC policy for the NCP pod is documented under Security Considerations in the NSX-T NCP documentation. We can modify this policy to create the namespace, service account and role resources.

The first ServiceAccount we create is called ncp-svc, along with two ClusterRoles, ncp-cluster-role and ncp-patch-role. The two roles are assigned to the new ServiceAccount using two ClusterRoleBindings. As the name suggests, the account is used to run processes in the NCP pod.

Figuring out these YAML files can be daunting, especially when you’re just getting started like me. I found the official documentation pretty useful to understand how Kubernetes service accounts and roles interact - see Managing Service Accounts.

# Create a ServiceAccount for NCP namespace
apiVersion: v1
kind: ServiceAccount
metadata:
  name: ncp-svc
  namespace: nsx-system
---
# Create ClusterRole for NCP
kind: ClusterRole
# Set the apiVersion to rbac.authorization.k8s.io/v1beta1 when k8s < v1.8
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: ncp-cluster-role
rules:
  - apiGroups:
      - ""
      - extensions
      - networking.k8s.io
    resources:
      - deployments
      - endpoints
      - pods
      - pods/log
      - networkpolicies
      # Move 'nodes' to ncp-patch-role when hyperbus is disabled.
      - nodes
      - replicationcontrollers
      # Remove 'secrets' if not using Native Load Balancer.
      - secrets
    verbs:
      - get
      - watch
      - list
---
# Create ClusterRole for NCP to edit resources
kind: ClusterRole
# Set the apiVersion to rbac.authorization.k8s.io/v1beta1 when k8s < v1.8
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: ncp-patch-role
rules:
  - apiGroups:
      - ""
      - extensions
    resources:
      # NCP needs to annotate the SNAT errors on namespaces
      - namespaces
      - ingresses
      - services
    verbs:
      - get
      - watch
      - list
      - update
      - patch
  # NCP needs permission to CRUD custom resource nsxerrors
  - apiGroups:
      # The api group is specified in custom resource definition for nsxerrors
      - nsx.vmware.com
    resources:
      - nsxerrors
      - nsxnetworkinterfaces
      - nsxlocks
    verbs:
      - create
      - get
      - list
      - patch
      - delete
      - watch
      - update
  - apiGroups:
      - ""
      - extensions
      - nsx.vmware.com
    resources:
      - ingresses/status
      - services/status
      - nsxnetworkinterfaces/status
    verbs:
      - replace
      - update
      - patch
---
# Bind ServiceAccount created for NCP to its ClusterRole
kind: ClusterRoleBinding
# Set the apiVersion to rbac.authorization.k8s.io/v1beta1 when k8s < v1.8
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: ncp-cluster-role-binding
roleRef:
  # Comment out the apiGroup while using OpenShift
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: ncp-cluster-role
subjects:
  - kind: ServiceAccount
    name: ncp-svc
    namespace: nsx-system
---
# Bind ServiceAccount created for NCP to the patch ClusterRole
kind: ClusterRoleBinding
# Set the apiVersion to rbac.authorization.k8s.io/v1beta1 when k8s < v1.8
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: ncp-patch-role-binding
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: ncp-patch-role
subjects:
  - kind: ServiceAccount
    name: ncp-svc
    namespace: nsx-system

The second ServiceAccount we need to create is called nsx-agent-svc, which is used to run the NSX Node Agent Pod. We create a ClusterRole to assign the required permissions, and then another ClusterRoleBinding to assign the account to the role.

# Create a ServiceAccount for nsx-node-agent
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nsx-agent-svc
  namespace: nsx-system
---
# Create ClusterRole for nsx-node-agent
kind: ClusterRole
# Set the apiVersion to rbac.authorization.k8s.io/v1beta1 when k8s < v1.8
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nsx-agent-cluster-role
rules:
  - apiGroups:
      - ""
    resources:
      - endpoints
      - services
      # Uncomment the following resources when hyperbus is disabled
      # - nodes
      # - pods
    verbs:
      - get
      - watch
      - list
---
# Bind ServiceAccount created for nsx-node-agent to its ClusterRole
kind: ClusterRoleBinding
# Set the apiVersion to rbac.authorization.k8s.io/v1beta1 when k8s < v1.8
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nsx-agent-cluster-role-binding
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: nsx-agent-cluster-role
subjects:
  - kind: ServiceAccount
    name: nsx-agent-svc
    namespace: nsx-system

Save the namespace, NCP and NSX Agent config as a single YAML file called nsx-ncp-rbac.yml, with each resource separated by a --- line, and then deploy the new resources using the following command:

Master Node or Admin Workstation#

kubectl apply -f nsx-ncp-rbac.yml

Prepare the NCP config file#

In order to deploy the NCP we use a YAML config file that’s included in the NSX Container Plugin zip package. This consists of a ConfigMap and a Deployment. These specify the configuration data required to run the application (in this case NCP) and the pod deployment details.

# Copy the ncp-deployment template from the NSX Container Plugin folder

cp nsx-container-2.4.1.13515827/Kubernetes/ncp-deployment.yml .

Next we need to edit the settings to match our environment - the file below has all the comments removed for clarity but I suggest reading through them all.

- Be sure to add the namespace

- cluster must match the value created in the NSX ncp/cluster tags

- I’m using password authentication for NSX in my lab…don’t do this in production! Configure TLS certificates instead

- All the component IDs below are the ones collected when we created the NSX components

- Be sure to update the serviceAccountName value with the ServiceAccount created in the RBAC

- Make sure the image value is updated with the tagged name in the docker local registry

apiVersion: v1
kind: ConfigMap
metadata:
  name: nsx-ncp-config
  namespace: nsx-system
  labels:
    version: v1
data:
  ncp.ini: |
    [DEFAULT]
    [coe]
    cluster = k8s-01
    nsxlib_loglevel=INFO
    enable_snat = True
    node_type = HOSTVM
    [ha]
    [k8s]
    apiserver_host_ip = 10.0.0.10
    apiserver_host_port = 6443
    ca_file = /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
    client_token_file = /var/run/secrets/kubernetes.io/serviceaccount/token
    [nsx_v3]
    nsx_api_managers = nsx-manager.definit.local
    nsx_api_user = admin
    nsx_api_password = VMware1!
    insecure = True
    top_tier_router = b92fbb43-b664-46e9-bd4a-d8d46dac82f8
    overlay_tz = tz-overlay
    subnet_prefix = 24
    use_native_loadbalancer = True
    l4_lb_auto_scaling = True
    default_ingress_class_nsx = True
    pool_algorithm = 'ROUND_ROBIN'
    service_size = 'SMALL'
    l7_persistence = 'source_ip'
    l4_persistence = 'source_ip'
    container_ip_blocks = ab81892f-eb9a-4248-8606-b26f2293fa83
    external_ip_pools = dde463fb-15d9-456f-aa3d-030e24f1b1db
    top_firewall_section_marker = f887ba4f-d634-44ff-8338-05c4a214cc93
    bottom_firewall_section_marker = 820da106-a35c-43c0-bcf0-edaf94004d9e
---
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: nsx-ncp
  namespace: nsx-system
  labels:
    tier: nsx-networking
    component: nsx-ncp
    version: v1
spec:
  replicas: 1
  template:
    metadata:
      labels:
        tier: nsx-networking
        component: nsx-ncp
        version: v1
    spec:
      hostNetwork: true
      serviceAccountName: ncp-svc
      containers:
        - name: nsx-ncp
          image: nsx-ncp-ubuntu:2.4.1.13515827
          imagePullPolicy: IfNotPresent
          env:
            - name: NCP_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: NCP_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          livenessProbe:
            exec:
              command:
                - /bin/sh
                - -c
                - timeout 5 check_pod_liveness nsx-ncp
            initialDelaySeconds: 5
            timeoutSeconds: 5
            periodSeconds: 10
            failureThreshold: 5
          volumeMounts:
            - name: config-volume
              mountPath: /etc/nsx-ujo/ncp.ini
              subPath: ncp.ini
              readOnly: true
      volumes:
        - name: config-volume
          configMap:
            name: nsx-ncp-config

Prepare the NSX Node Agent config file#

For the NSX Node Agent, we also need to prepare a YAML config file, this time consisting of a ConfigMap and a DaemonSet.

# Copy the NSX Node Agent YAML config

cp nsx-container-2.4.1.13515827/Kubernetes/ubuntu_amd64/nsx-node-agent-ds.yml .

Again I’ve removed the comments to make it clearer but they’re useful to read.

- Be sure to add the namespace

- cluster must match the value created in the NSX ncp/cluster tags

- ovs_bridge must match the bridge created earlier

- Be sure to update the serviceAccountName value with the ServiceAccount created in the RBAC

- Make sure the image value is updated with the tagged name in the docker local registry

apiVersion: v1
kind: ConfigMap
metadata:
  name: nsx-node-agent-config
  namespace: nsx-system
  labels:
    version: v1
data:
  ncp.ini: |
    [DEFAULT]
    [coe]
    cluster = k8s-01
    nsxlib_loglevel=INFO
    enable_snat = True
    node_type = HOSTVM
    [ha]
    [k8s]
    apiserver_host_ip = 10.0.0.10
    apiserver_host_port = 6443
    ca_file = /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
    client_token_file = /var/run/secrets/kubernetes.io/serviceaccount/token
    [nsx_node_agent]
    ovs_bridge = br-int
    [nsx_kube_proxy]
---
apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: nsx-node-agent
  namespace: nsx-system
  labels:
    tier: nsx-networking
    component: nsx-node-agent
    version: v1
spec:
  updateStrategy:
    type: RollingUpdate
  template:
    metadata:
      annotations:
        container.apparmor.security.beta.kubernetes.io/nsx-node-agent: localhost/node-agent-apparmor
      labels:
        tier: nsx-networking
        component: nsx-node-agent
        version: v1
    spec:
      hostNetwork: true
      serviceAccountName: nsx-agent-svc
      containers:
        - name: nsx-node-agent
          image: nsx-ncp-ubuntu:2.4.1.13515827
          imagePullPolicy: IfNotPresent
          command: ["start_node_agent"]
          livenessProbe:
            exec:
              command:
                - /bin/sh
                - -c
                - timeout 5 check_pod_liveness nsx-node-agent
            initialDelaySeconds: 5
            timeoutSeconds: 5
            periodSeconds: 10
            failureThreshold: 5
          securityContext:
            capabilities:
              add:
                - NET_ADMIN
                - SYS_ADMIN
                - SYS_PTRACE
                - DAC_READ_SEARCH
          volumeMounts:
            - name: config-volume
              mountPath: /etc/nsx-ujo/ncp.ini
              subPath: ncp.ini
              readOnly: true
            - name: openvswitch
              mountPath: /var/run/openvswitch
            - name: cni-sock
              mountPath: /var/run/nsx-ujo
            - name: netns
              mountPath: /var/run/netns
            - name: proc
              mountPath: /host/proc
              readOnly: true
        - name: nsx-kube-proxy
          image: nsx-ncp-ubuntu:2.4.1.13515827
          imagePullPolicy: IfNotPresent
          command: ["start_kube_proxy"]
          livenessProbe:
            exec:
              command:
                - /bin/sh
                - -c
                - timeout 5 check_pod_liveness nsx-kube-proxy
            initialDelaySeconds: 5
            periodSeconds: 5
          securityContext:
            capabilities:
              add:
                - NET_ADMIN
                - SYS_ADMIN
                - SYS_PTRACE
                - DAC_READ_SEARCH
          volumeMounts:
            - name: config-volume
              mountPath: /etc/nsx-ujo/ncp.ini
              subPath: ncp.ini
              readOnly: true
            - name: openvswitch
              mountPath: /var/run/openvswitch
      volumes:
        - name: config-volume
          configMap:
            name: nsx-node-agent-config
        - name: cni-sock
          hostPath:
            path: /var/run/nsx-ujo
        - name: netns
          hostPath:
            path: /var/run/netns
        - name: proc
          hostPath:
            path: /proc
        - name: openvswitch
          hostPath:
            path: /var/run/openvswitch

Finally…deploy NCP and NSX Node Agent!#

After what seems like a long journey, we’re finally at the point where we can deploy the NCP and Node Agent.
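Before applying the files, a quick consistency check can save a round of debugging. This sketch assumes both edited files sit in the current directory under the names used above; `ini_value` and `same_cluster` are helpers of my own, and the parsing is deliberately naive (the first matching `key = value` line wins).

```shell
#!/bin/sh
# Sketch: confirm both manifests carry the same ncp.ini cluster name.
# ini_value and same_cluster are hypothetical helpers.

ini_value() {
    # $1 = key, $2 = file; prints the value of the first "key = value" line.
    sed -n -E "s/^[[:space:]]*$1[[:space:]]*=[[:space:]]*(.*)$/\1/p" "$2" | head -n 1
}

same_cluster() {
    # $1 and $2 = manifest files that embed an ncp.ini section
    a=$(ini_value cluster "$1")
    b=$(ini_value cluster "$2")
    [ -n "$a" ] && [ "$a" = "$b" ]
}

# Example:
#   same_cluster ncp-deployment.yml nsx-node-agent-ds.yml || echo "cluster names differ"
```

The same `ini_value` call can be pointed at other keys, such as ovs_bridge, to compare them with what was configured on the nodes.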

Master Node or Admin Workstation#

kubectl apply -f ncp-deployment.yml

kubectl apply -f nsx-node-agent-ds.yml

All being well, we should now have NCP configured and ready to go. Switch to the nsx-system namespace (check out kubens for this!) and view the resources using kubectl get <resource> and kubectl describe <resource> <resource name>.
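For a quick health check without switching context, the pod list can be filtered for anything that is not fully up. A minimal sketch: `pods_not_ready` is a hypothetical helper, and it assumes the default column layout of `kubectl get pods --no-headers` (NAME, READY, STATUS, and so on).

```shell
#!/bin/sh
# Sketch: list pods that are not Running or not fully ready.
# pods_not_ready is a hypothetical helper; it reads the output of
# "kubectl get pods --no-headers" on standard input.
pods_not_ready() {
    awk '{
        split($2, ready, "/")    # READY column, e.g. "1/2"
        if ($3 != "Running" || ready[1] != ready[2]) print $1
    }'
}

# Example:
#   kubectl -n nsx-system get pods --no-headers | pods_not_ready
```

An empty result means every pod in the namespace reports Running with all of its containers ready.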

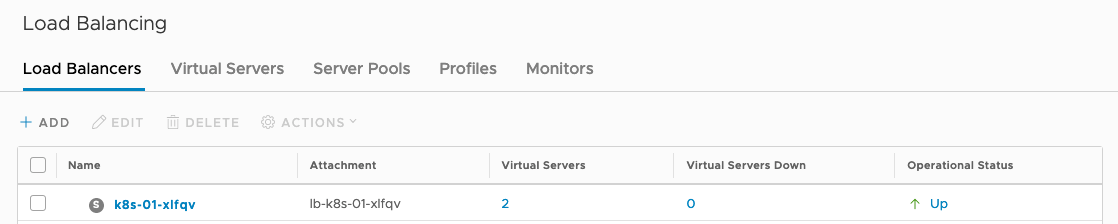

NCP will create components for each Kubernetes namespace; these can be viewed through the Advanced Networking and Security tab.