Using GitLab Pipelines to deploy Hugo sites to AWS

I’ve posted previously about moving to Hugo as the publishing platform for this blog; this post covers how I’m managing publishing using GitLab’s CI/CD Pipelines.

First, I should mention that I’m using three different repositories for my code base, and explain why. The three repositories are:

- definit-hugo - this contains the Hugo site configuration

- definit-content - this contains the site content - markdown files, images etc

- definit-theme - this contains the VMware Clarity-based theme I use for my site

definit-content and definit-theme are git submodules in the definit-hugo project, mapped into the /content and /themes folders respectively. This lets me keep the configuration, content and theme separate and manage them as independent entities. The aim is to eventually release the theme, and I don’t want to have to extract it from my Hugo code base later on.

Using submodules in this way throws up a couple of challenges when I’m triggering a build pipeline, which I’ll get into later on.
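GitLab runners don't clone submodules by default, so the /content and /themes folders would be empty inside a CI job. A minimal sketch of one way to fetch them, assuming the submodule URLs in .gitmodules are relative paths (the approach GitLab recommends for submodules hosted on the same instance):

```yaml
# Illustrative fragment for definit-hugo/.gitlab-ci.yml (an assumption, not the actual file).
variables:
  # The default strategy, "none", skips submodules entirely.
  # "recursive" clones the content and theme submodules, plus any submodules
  # they contain, before every job runs.
  GIT_SUBMODULE_STRATEGY: recursive
```

Bear in mind this checks out the submodule commits recorded in definit-hugo, which won't necessarily be the latest commit on definit-content.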

At a high level, I want my publishing process to be something like this:

- Write a post in markdown

- Commit and push to the definit-content repo

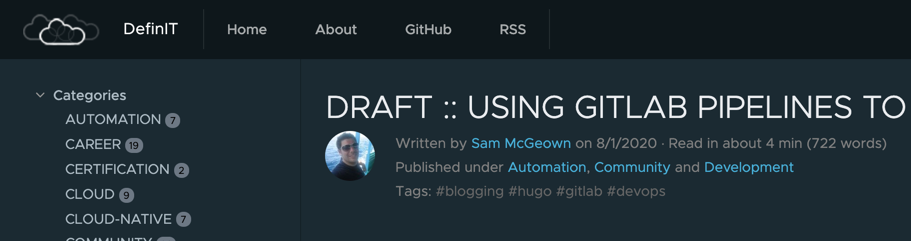

- Build the staging site, including drafts and posts scheduled for future publication

- Push the staging site to AWS S3

- Build the production site

- Push the production site to AWS S3

- Invalidate the AWS CloudFront cache

I push drafts and future posts to staging so there’s a preview available without needing Hugo running locally. I tend to use hugo server to preview changes while I’m writing, but others (cough Simon cough) don’t necessarily have the same setup or knowledge as me. That’s fair enough: he knows plenty of stuff that I don’t, which is why he’s a great co-author on this blog.

Configuring the Hugo Build Pipeline in GitLab

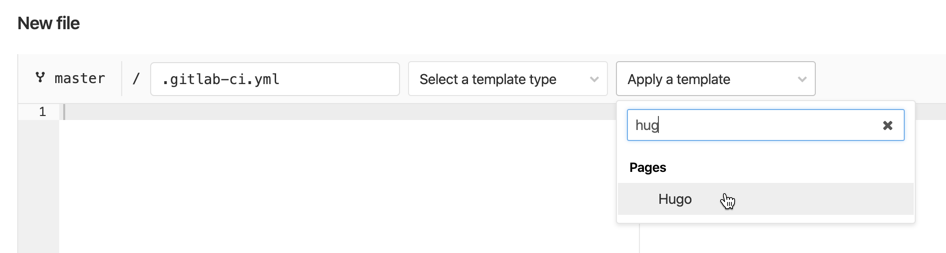

GitLab CI/CD pipelines are configured using a .gitlab-ci.yml file in the root of the repository. This file is used to describe the various stages of the build, and is automatically triggered when changes are committed to the repository. Although it’s just a YAML file, I found the syntax to be a little daunting at first, but fortunately there’s an extensive Pipeline Configuration Reference and plenty of templates to get you started. I started with the Hugo template provided.
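As a rough illustration rather than the exact file, the publishing steps listed earlier could map onto stages like this; the stage names are hypothetical:

```yaml
# Illustrative stages for .gitlab-ci.yml; the names are made up for this example.
# Stages run in the order listed, and by default a stage starts only after
# every job in the previous stage has succeeded.
stages:
  - build_staging      # Hugo build including drafts and future-dated posts
  - deploy_staging     # push the staging build to S3
  - build_production   # Hugo build of published content only
  - deploy_production  # push to S3, then invalidate the CloudFront cache
```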

In the definit-hugo repository, I have created some variables (Settings > CI/CD > Variables) to hold the more sensitive data:

| Key | Value |
|---|---|
| AWS_ACCESS_KEY_ID | My AWS API access key ID |
| AWS_SECRET_ACCESS_KEY | My AWS API secret access key |
| AWS_DEFAULT_REGION | The AWS region my S3 bucket resides in |
| AWS_S3_Staging_Bucket | The name of the staging site S3 bucket |
| AWS_S3_Production_Bucket | The name of the production site S3 bucket |
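A sketch of how deploy jobs might consume these variables, assuming deploy_staging and deploy_production stages are declared under stages:, and that build jobs named build_staging and build_production each saved Hugo's public/ folder as an artifact. The AWS CLI reads AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY and AWS_DEFAULT_REGION straight from the environment, so only the bucket names need referencing. AWS_CLOUDFRONT_DISTRIBUTION_ID is a hypothetical extra variable that isn't in the table above.

```yaml
# Illustrative deploy jobs (assumptions, not the actual pipeline).
deploy_staging:
  stage: deploy_staging
  image:
    name: amazon/aws-cli:latest
    entrypoint: [""]   # the image's entrypoint is "aws"; clear it so the script runs in a shell
  dependencies:
    - build_staging    # only download the staging build's public/ artifact
  script:
    # Upload new and changed files, and delete objects no longer in the build.
    - aws s3 sync public/ "s3://${AWS_S3_Staging_Bucket}" --delete

deploy_production:
  stage: deploy_production
  image:
    name: amazon/aws-cli:latest
    entrypoint: [""]
  dependencies:
    - build_production # only download the production build's public/ artifact
  script:
    - aws s3 sync public/ "s3://${AWS_S3_Production_Bucket}" --delete
    # Hypothetical variable holding the CloudFront distribution ID.
    - aws cloudfront create-invalidation --distribution-id "${AWS_CLOUDFRONT_DISTRIBUTION_ID}" --paths "/*"
```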

These variables are used in the .gitlab-ci.yml file below, which I’ve commented heavily to show what’s happening.

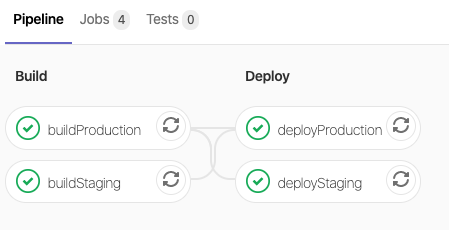

Committing the .gitlab-ci.yml file to the definit-hugo repository actually triggers the pipeline.

When draft: true is set in a post’s front matter, the post is built and released to the staging environment, but not to the “live” blog, because only the staging build uses the --buildDrafts flag.
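A minimal sketch of the two build jobs, assuming build_staging and build_production stages are declared under stages:. The image name is an assumption; use whichever Hugo image your template specifies.

```yaml
# Illustrative build jobs (assumptions, not the actual pipeline).
build_staging:
  stage: build_staging
  image: registry.gitlab.com/pages/hugo:latest   # assumed Hugo image
  script:
    # Render draft: true content and posts with a future publish date.
    - hugo --buildDrafts --buildFuture
  artifacts:
    paths:
      - public   # Hugo's default output folder

build_production:
  stage: build_production
  image: registry.gitlab.com/pages/hugo:latest
  # Jobs download artifacts from earlier stages by default, and Hugo doesn't
  # clear public/ before building, so skip the staging artifact to keep
  # drafts out of the production output.
  dependencies: []
  script:
    # No draft or future flags: only published content is rendered.
    - hugo
  artifacts:
    paths:
      - public
```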

All good so far, but there’s a problem here. I want to commit new posts to the definit-content repository, not the definit-hugo repository, so I need a pipeline in the definit-content repository that triggers the pipeline in the definit-hugo project. Fortunately GitLab makes this easy with the trigger keyword: I simply create a job that uses trigger with the path to my definit-hugo project.
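A minimal sketch of such a job in definit-content's .gitlab-ci.yml; group/definit-hugo is a placeholder for the real project path, and strategy: depend is optional:

```yaml
# Illustrative definit-content/.gitlab-ci.yml (an assumption, not the actual file).
trigger_hugo_build:
  trigger:
    project: group/definit-hugo   # placeholder path to the downstream project
    # Optional: make this job's status mirror the downstream pipeline's result.
    strategy: depend
```

With no branch given, the downstream pipeline runs on definit-hugo's default branch.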

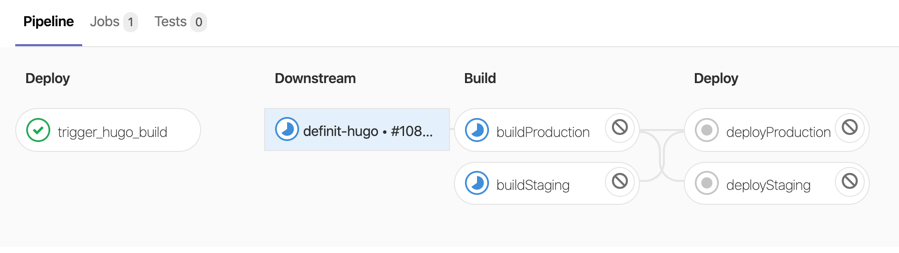

Now when there’s a commit to the definit-content project, its pipeline triggers the downstream build, and all the downstream steps are visible in the pipeline.